Prospect sourcing via APIs: a clean outbound list process

Prospect sourcing via APIs made practical: pull leads from providers, dedupe, validate emails, segment by fit, and prep lists before sending.

Why raw API leads often turn into a messy outbound list

Prospect sourcing via APIs feels like getting fresh leads straight from the source. In practice, API results are usually a mix of perfect matches, near-misses, and records that look fine until you start sending.

The fastest way an outbound list goes bad is simple: bad emails. Providers often return guesses, catch-all domains, role inboxes (like info@), or addresses that used to work. If you send to them, bounces climb. Enough bounces and inbox providers start treating your next emails as risky, even if your message is solid.

Duplicates are the other quiet problem. The same person can show up twice because they changed jobs, have two emails, or appear in multiple datasets you pulled. Double-sending is wasteful and can trigger spam complaints when someone gets the same pitch again from the same brand. Near-duplicates (same company, same name, slightly different title) lead to repeat outreach that makes you look sloppy.

Old records hurt more than most teams expect. People change roles, companies get acquired, and email formats change. You end up pitching the wrong person, at the wrong company, with the wrong context. That drives low replies, more negative replies, and fewer real opportunities.

List quality beats list size for cold email because every send is a small trust test with inbox providers and recipients. A smaller list that is accurate and relevant usually performs better than a huge list full of maybes.

A clean outbound list is four things: accurate (details are correct enough to personalize), unique (one record per real person), reachable (email is likely to deliver), and relevant (fits your ICP and exclusions).

Set your rules first: ICP, required fields, and exclusions

Before you pull a single record, decide what a good lead means for you. Prospect sourcing via APIs is fast, and speed is how teams end up emailing the wrong roles, missing key info, or hitting blocked regions.

Start with your ICP in plain words. Don’t write a long document. Write one short sentence you can apply while scanning rows: industry, company size, role level, and region. “US and Canada B2B SaaS, 20 to 200 employees, founders and heads of sales” is clear. “Tech companies” isn’t.

Next, define the fields that must be present for a lead to be usable. Many teams ask for everything, then wonder why half the list is incomplete. You usually only need a few essentials to send a decent first message and route replies correctly.

Write down rules everyone will use:

- ICP filters (industry, headcount range, target titles, regions)

- Required fields (first name, company name, role/title, company domain, email or a clear way to get it)

- Exclusions (competitors, current customers, past opportunities, blocked regions)

- Unique ID (how you recognize the same person again)

- Success metrics (bounce rate, positive reply rate, meetings booked, with targets)

A quick example: you pull 5,000 records from a provider API. Without exclusions, you can email existing customers and get complaints. Without a unique ID, the same VP of Sales can appear three times from different sources and get three sequences.
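One way to make these rules hard to skip is to write them down as a small config object that every pull runs through. The sketch below is only an illustration: the `LeadRules` class, the field names (`company_domain`, `headcount`, `country`), and the example values are assumptions, not any provider's schema. Email is left out of the required fields here because the rule above allows "a clear way to get it" instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeadRules:
    """Hypothetical container for the ICP, required fields, and exclusions."""
    regions: frozenset = frozenset({"US", "CA"})
    headcount_min: int = 20
    headcount_max: int = 200
    target_titles: tuple = ("founder", "head of sales")
    required_fields: tuple = ("first_name", "company_name", "title", "company_domain")
    excluded_domains: frozenset = frozenset()  # competitors, customers, past opportunities


def check_lead(lead: dict, rules: LeadRules) -> tuple[bool, str]:
    """Return (usable, reason). The reason can be logged when a lead is rejected."""
    for name in rules.required_fields:
        if not str(lead.get(name) or "").strip():
            return False, f"missing required field: {name}"
    if lead["company_domain"].lower() in rules.excluded_domains:
        return False, "excluded company"
    if lead.get("country") not in rules.regions:
        return False, "outside target regions"
    headcount = lead.get("headcount")
    if headcount is None or not rules.headcount_min <= headcount <= rules.headcount_max:
        return False, "headcount outside ICP range"
    title = lead["title"].lower()
    if not any(target in title for target in rules.target_titles):
        return False, "title not in ICP"
    return True, "ok"
```

Returning a reason alongside the yes/no answer keeps rejected leads explainable later.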

Metrics matter because they guide stop rules. For many teams, a bounce rate that creeps up is the earliest warning that list quality or validation is slipping.

Pick data providers and understand what their API gives you

Your outbound list will only be as good as the data provider behind it. Start with one primary provider that matches your market (industry, geography, company size). Add a backup provider only if coverage is thin. Using two providers from day one often creates more duplicates and mixed data quality than you expect.

When you evaluate a provider, look past the headline “contact database” and focus on what the API actually returns. Some APIs give a direct email. Others return a likely email with a confidence score. Some include a last-seen or last-verified date, others don’t. Many return multiple possible emails with different sources. Those details drive what you keep, what you validate, and what you quarantine.

Be realistic about rate limits and pagination. API pulls rarely look like “give me 10,000 records.” You’ll usually request pages (say 100 at a time) and you may be capped per minute or per day. Build the workflow around smaller pulls you can retry safely, not one giant job that fails halfway.
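A page-by-page pull with retries might look like the sketch below. It assumes you wrap your provider's client in a `fetch_page(page_number)` function that returns a list of records and an empty list when there is nothing left; that function, the page numbering, and the pause values are all hypothetical and will differ per provider.

```python
import time
from typing import Callable, Iterator


def pull_pages(
    fetch_page: Callable[[int], list],
    max_pages: int = 100,
    max_retries: int = 3,
    pause_seconds: float = 1.0,
) -> Iterator[list]:
    """Yield one page of records at a time, retrying a failed page before giving up."""
    for page in range(1, max_pages + 1):
        for attempt in range(1, max_retries + 1):
            try:
                records = fetch_page(page)
                break
            except Exception:
                if attempt == max_retries:
                    raise  # surface the failure; earlier pages were already yielded
                time.sleep(pause_seconds * attempt)  # simple linear backoff
        if not records:
            return  # an empty page means there is nothing left to pull
        yield records
        time.sleep(pause_seconds)  # crude pacing to stay under per-minute caps
```

Because pages are yielded as they arrive, you can store each one immediately and resume from the last good page instead of restarting the whole job.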

Capture metadata every time you pull. This is what lets you debug bad lists and prevents mystery records that no one trusts:

- Provider name and endpoint used

- Query/filters and exact timestamp

- Provider record ID (and any company ID)

- Confidence, source, and last-updated fields

- Who or what system ran the pull

Example: you pull 2,000 leads via a data provider API workflow (for example, Apollo via API) for “US SaaS companies, 11-50 employees.” A week later, you run a similar pull with slightly different title filters. Without metadata, you can’t explain why the same person appears twice with two different emails, or which record is newer.
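A minimal way to capture that metadata is to wrap every raw record in an envelope at pull time. In this sketch the function name, the envelope keys, and the assumption that the provider's record ID lives under `"id"` are all illustrative.

```python
from datetime import datetime, timezone


def wrap_with_pull_metadata(records, provider, endpoint, filters, run_by):
    """Attach the same pull metadata to every raw record from one API call."""
    pulled_at = datetime.now(timezone.utc).isoformat()
    return [
        {
            "provider": provider,
            "endpoint": endpoint,
            "filters": dict(filters),  # copy so later edits don't change the log
            "pulled_at": pulled_at,
            "run_by": run_by,
            "provider_record_id": rec.get("id"),  # assumes the provider calls it "id"
            "raw": rec,  # untouched payload, including any confidence/source fields
        }
        for rec in records
    ]
```

With this in place, two records for the same person can be compared by `pulled_at` and `filters` instead of guesswork.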

Finally, keep provider terms and privacy rules in mind. Even if you’re not a lawyer, you should understand basics like what you can store, how long you can keep it, and whether certain fields are restricted.

Ingest and normalize the data before you clean it

Before you dedupe or validate emails, make sure the data you pulled is shaped the same way. Most issues in prospect sourcing via APIs start here: different providers label fields differently, use different formats, and return messy company and role info.

Start with small batches. Pull 50 to 200 records first, then scan them like a human would. Are the company websites real? Do job titles look like what you target? Do you see junk like “N/A” names or missing countries? It’s faster to catch a bad source or wrong query now than after you’ve processed 10,000 rows.

Normalization means choosing one consistent format for each key field. One provider might return “Acme, Inc.” and another returns “ACME Incorporated.” If you don’t normalize, you’ll miss duplicates later and you might send two sequences to the same company.

A practical normalization checklist

Aim to end up with one clean schema you always use, even if the incoming APIs differ.

For companies, keep a consistent name and a canonical domain. Trim extra spaces, standardize casing, and consider removing legal suffixes (Inc, LLC) in a separate “normalized” field while keeping a display name for personalization.

For websites, extract one canonical domain (example.com), remove paths and tracking parameters, and force lowercase. For job titles, map common variants into the groups you’ll actually segment on (for example, “Head of Sales”, “Sales Lead”, “VP Sales” -> “Sales leadership”).

Standardize location and industry too. Pick one country/state format and one industry taxonomy. And always keep IDs and timestamps: provider record ID and the time you pulled it are your audit trail.

Missing fields need rules, not guesses. If the domain is missing but a company name exists, put the record into a research or enrichment queue. If the email is missing, treat it as incomplete and either enrich, generate, or drop it based on policy.

Store two versions: the raw payload as received, and the normalized record you’ll actually process. That separation makes it easier to trace why “John Smith at Example” changed domains, or why a title got grouped differently.
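The normalization rules above can be sketched in a few small functions. This is a simplified illustration: the input keys (`company`, `website`, `title`), the suffix list, and the title mapping are assumptions, and the domain helper only strips `www.` rather than resolving every subdomain to a registered domain.

```python
import re
from urllib.parse import urlparse

LEGAL_SUFFIXES = re.compile(r"[,\s]+(inc|incorporated|llc|ltd|corp|gmbh)\.?$", re.IGNORECASE)

TITLE_GROUPS = {  # illustrative mapping; extend it with the titles you target
    "head of sales": "Sales leadership",
    "sales lead": "Sales leadership",
    "vp sales": "Sales leadership",
}


def normalize_domain(website: str) -> str:
    """Reduce a URL or bare host to a lowercase domain without 'www.'."""
    value = website.strip().lower()
    if "//" not in value:
        value = "//" + value  # lets urlparse treat a bare host as a netloc
    host = urlparse(value).hostname or ""
    return host[4:] if host.startswith("www.") else host


def normalize_company(name: str) -> str:
    """Collapse whitespace, strip one trailing legal suffix, and lowercase."""
    cleaned = " ".join(name.split())
    return LEGAL_SUFFIXES.sub("", cleaned).lower()


def normalize_record(raw: dict) -> dict:
    """Build the normalized record while keeping the raw payload next to it."""
    title = " ".join((raw.get("title") or "").split())
    company = raw.get("company") or ""
    return {
        "company_display": company.strip(),  # kept for personalization
        "company_normalized": normalize_company(company),
        "domain": normalize_domain(raw.get("website") or ""),
        "title": title,
        "title_group": TITLE_GROUPS.get(title.lower(), "Unmapped"),
        "raw": raw,  # original payload stays alongside the normalized fields
    }
```

With these rules, "Acme, Inc." and "ACME Incorporated" both normalize to `acme`, which is what lets a later dedupe step match them.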

Deduping: how to avoid double-sending and repeat outreach

If you do prospect sourcing via APIs, duplicates aren’t a maybe; they’re guaranteed. One person can show up from two providers. The same company can appear under slightly different names. A contact can be updated mid-week with a new title or email.

Start with deduping inside a single pull. Use hard keys first (exact email, provider person ID). Then add softer checks for the cases APIs create: same full name plus same company domain, or the same LinkedIn URL if you have it. Also dedupe at the domain level when it matches your approach (for example, if you only want one contact per small company).

Next, dedupe across past pulls. The goal is simple: don’t email the same person twice unless you intentionally planned a new cycle months later. Keep a history table of every contact you’ve ever queued or sent to, keyed by email and any stable IDs you store. When a new batch comes in, compare it to that history before it touches a sequence.

When duplicates disagree, pick the best record instead of deleting both. A practical rule: newest wins for job title and company, most complete wins for missing fields. If one record has a verified email and the other has stronger firmographic data, merge them into one contact.
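That merge rule could be sketched as follows. The record shape is an assumption: each record is a flat dict with an ISO 8601 `pulled_at` string (same timezone, so string comparison orders correctly) and a hypothetical `email_verified` flag.

```python
def merge_duplicates(a: dict, b: dict) -> dict:
    """Merge two records for the same person into one contact."""
    newer, older = (a, b) if a["pulled_at"] >= b["pulled_at"] else (b, a)
    # Start from the older record, then let newer non-empty values overwrite it.
    # Empty values in the newer record never erase data the older one had.
    merged = {**older, **{k: v for k, v in newer.items() if v not in (None, "")}}
    # A verified email beats an unverified one, regardless of which is newer.
    if older.get("email_verified") and not newer.get("email_verified"):
        merged["email"] = older["email"]
        merged["email_verified"] = True
    return merged
```

The result carries the newer title, company, and timestamp, keeps a verified email if either record had one, and fills gaps from whichever record had the data.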

A short checklist that prevents most repeat outreach:

- Dedupe by exact email, then by stable person ID, then by name + company domain.

- Compare against your full sending history, not just the last import.

- Merge fields and keep the most recent timestamp when data conflicts.

- Maintain suppression lists for do-not-contact, unsubscribes, bounces, and “not interested”.

- Log removals with a clear reason (duplicate, suppressed, missing required fields).

That last point matters. When a teammate asks “why wasn’t this lead uploaded?”, you want a one-line answer.
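Here is a rough sketch of a dedupe pass that produces that one-line answer. It assumes records are already normalized (lowercase email and domain), that `history_keys` is a set built from everything you have ever queued or sent, and that the key names (`person_id`, `full_name`, `domain`) are placeholders for whatever your schema uses.

```python
def dedupe_batch(batch, history_keys, suppressed_emails):
    """Split a batch into (kept, removed); every removal carries a reason."""
    seen = set(history_keys)
    kept, removed = [], []
    for rec in batch:
        email = rec.get("email")
        if not email:
            removed.append((rec, "missing required fields"))
            continue
        if email in suppressed_emails:
            removed.append((rec, "suppressed"))
            continue
        # Hard keys first (email, person ID), then the softer name + domain key.
        keys = {("email", email)}
        if rec.get("person_id"):
            keys.add(("person_id", rec["person_id"]))
        if rec.get("full_name") and rec.get("domain"):
            keys.add(("name_domain", rec["full_name"].lower(), rec["domain"]))
        if keys & seen:
            removed.append((rec, "duplicate"))
            continue
        seen |= keys
        kept.append(rec)
    return kept, removed
```

This version simply drops the later duplicate. If you merge duplicates instead, do the merge at the point where `"duplicate"` is logged. If you pull from more than one provider, prefix `person_id` with the provider name so IDs from different sources cannot collide.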

Email validation: reduce bounces before they happen

Bounces aren’t just a data problem. They can hurt your sender reputation fast, especially when you’re scaling prospect sourcing via APIs and the list grows every day.

Separate two different checks:

- Syntax check: does this look like an email address?

- Mailbox check: can this mailbox actually receive mail?

Syntax is quick and cheap, but it won’t catch dead inboxes, blocked domains, or catch-all setups. Mailbox checks are slower and sometimes uncertain, but they reduce real bounces.

Validate the domain before you validate the person. If the domain can’t receive email, no contact-level check will save it. At minimum, check for MX records, disposable or temporary domains, and catch-all behavior (where every address looks valid, even fake ones).

Treat validation results as a decision tool, not a simple pass/fail. One practical way to bucket outcomes:

- Keep: valid domain, mailbox confirmed

- Fix: obvious typos, wrong formatting, missing parts

- Discard: invalid domain, hard fail mailbox, disposable domain

- Hold: catch-all, unknown, or rate-limited results

- Do not send: risky patterns (role inboxes like info@, recently created domains)

Protect deliverability by defaulting to “no send” for Hold and Do not send. If you want to test them, do it in a small, separate campaign with lower volume.
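The buckets can be expressed as one small decision function. The input dict here is hypothetical: real validation services name their fields differently, so treat `syntax_ok`, `domain_has_mx`, `mailbox`, `catch_all`, `disposable`, and `domain_recently_created` as stand-ins, and the role inbox list as a starting point.

```python
ROLE_LOCALPARTS = {"info", "sales", "support", "admin", "contact", "hello"}  # illustrative


def bucket_validation(result: dict) -> str:
    """Map a validation result to Keep / Fix / Discard / Hold / Do not send."""
    email = result.get("email", "")
    if not result.get("syntax_ok", False):
        return "Fix"
    if not result.get("domain_has_mx", False) or result.get("disposable", False):
        return "Discard"
    if result.get("mailbox") == "invalid":
        return "Discard"
    is_role_inbox = email.split("@")[0].lower() in ROLE_LOCALPARTS
    if is_role_inbox or result.get("domain_recently_created", False):
        return "Do not send"
    if result.get("catch_all", False) or result.get("mailbox") != "valid":
        return "Hold"  # catch-all, unknown, or rate-limited results
    return "Keep"
```

Only "Keep" should flow into the main campaign; everything else either gets fixed, dropped, or parked.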

Track the validation date. Lists go stale quietly as people change jobs and domains expire. Add a rule like: re-validate any record older than 30 to 60 days before importing into your sending setup.
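A staleness check like that takes only a few lines. This sketch assumes the validation timestamp is stored as an ISO 8601 string with a UTC offset; the function name and the 30-day default are placeholders.

```python
from datetime import datetime, timedelta, timezone


def needs_revalidation(validated_at: str, max_age_days: int = 30, now=None) -> bool:
    """True when the stored validation timestamp is older than the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - datetime.fromisoformat(validated_at) > timedelta(days=max_age_days)
```

Run it at import time so stale records are routed back to validation rather than into a sequence.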

Segmenting: turn one list into targeted mini-lists

After prospect sourcing via APIs, segmentation is where a big list becomes a set of small, clear targets. Each segment should change what you say, when you send, or how hard you push.

Start with segments that actually affect the message. “Marketing” is too broad. “VP Marketing at B2B SaaS hiring SDRs” is specific enough to write a different opener and pitch. Useful drivers are role (what they own), pain (what they likely struggle with), triggers (what changed recently), and region (timing and norms).

A simple way to keep segments honest is a tiny scoring system: one score for fit, one for data quality. Fit decides who you want. Data quality decides who you can safely email.

- Fit score (0-5): role match, company size match, industry match, trigger present

- Data quality score (0-5): verified email, recent activity date, complete name/title, company domain matches

- Action rule: high fit + high quality goes first; high fit + low quality goes to enrichment; low fit gets deprioritized
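A tiny version of that scoring system is sketched below. The weights are an assumption made to reach a 0-5 range from four signals (role match and verified email count double), the boolean field names are hypothetical, and `domain_matches_email` is one possible reading of "company domain matches".

```python
def fit_score(lead: dict) -> int:
    """0-5 fit score. Assumed weighting: role match counts double."""
    return (
        2 * bool(lead.get("role_match"))
        + bool(lead.get("size_match"))
        + bool(lead.get("industry_match"))
        + bool(lead.get("trigger_present"))
    )


def quality_score(lead: dict) -> int:
    """0-5 data quality score. Assumed weighting: a verified email counts double."""
    return (
        2 * bool(lead.get("email_verified"))
        + bool(lead.get("recent_activity"))
        + bool(lead.get("name_and_title_complete"))
        + bool(lead.get("domain_matches_email"))
    )


def next_action(lead: dict, threshold: int = 4) -> str:
    """Apply the action rule: send first, enrich, or deprioritize."""
    if fit_score(lead) < threshold:
        return "deprioritize"
    return "send_first" if quality_score(lead) >= threshold else "enrich"
```

Keeping the two scores separate means a great-fit lead with a shaky email goes to enrichment instead of being sent or thrown away.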

Timing matters as much as copy. Split by time zone and language so your sends land during normal work hours and your writing sounds natural. Even a simple split like “North America vs Europe” and “English vs non-English” can lift replies and reduce spam complaints.

Also separate truly new prospects from re-engagement prospects. Re-engagement needs a softer start and usually lower volume, because older data is more likely to bounce or hit people who already said “no” elsewhere.

Step-by-step workflow from API pull to ready-to-send list

Prospect sourcing via APIs works best when you treat it like a small data pipeline: keep raw data untouched, then produce a clean send list you can trust.

A practical workflow you can repeat

Start by writing down your ICP and the filters you’ll use every time. Keep it strict. Example: “US B2B SaaS, 10-200 employees, Head of RevOps or Sales Ops, excludes agencies and current customers.” Decide minimum fields too (name, title, company, company domain, email or email pattern, location).

Run the pull and store the raw output as-is. Don’t edit it in place. You want to re-check provider data later when something looks off.

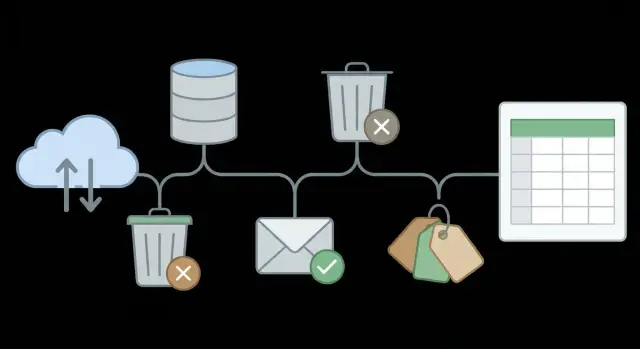

Here’s a repeatable sequence:

- Query + pull: call the provider API with your filters, and capture the full response (including IDs and timestamps).

- Normalize: standardize key fields (job titles, countries, company names) into consistent casing and formatting.

- Fix company domains: pick one canonical domain per company (no tracking domains, no country variants unless you target by region).

- Dedupe + suppress: remove duplicates by email first, then by person + company, then by company domain. Apply suppression (unsubscribes, do-not-contact, past hard bounces, existing customers, current opportunities).

- Validate + risk filter: validate emails, then drop or quarantine risky ones (invalid, disposable, role accounts like info@, and catch-all if your policy treats it as high risk).

After that, score and segment so you send in small, focused batches. Keep “VP+” separate from “Manager,” or “high intent” separate from “cold,” so your messaging stays relevant.
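To keep the pull-to-validate sequence above auditable, one option is a small runner that applies each stage in order and records how many records survive it. The stages below are deliberately trivial placeholders; the point of the sketch is the runner and the per-stage report, not the stage logic.

```python
from typing import Callable, Iterable

Stage = Callable[[list], list]


def run_pipeline(raw_records: list, stages: Iterable[tuple[str, Stage]]):
    """Run stages in order and report the record count after each one."""
    # Shallow copy: stages should return new dicts rather than edit rows in place,
    # so the stored raw pull stays untouched.
    records = list(raw_records)
    report = [("pulled", len(records))]
    for name, stage in stages:
        records = stage(records)
        report.append((name, len(records)))
    return records, report


# Placeholder stages for illustration only.
stages = [
    ("normalize", lambda rows: [{**r, "email": r["email"].strip().lower()} for r in rows]),
    ("dedupe", lambda rows: list({r["email"]: r for r in rows}.values())),
    ("validate", lambda rows: [r for r in rows if "@" in r["email"]]),
]
send_list, report = run_pipeline(
    [{"email": "Ana@Acme.com "}, {"email": "ana@acme.com"}, {"email": "broken"}], stages
)
print(report)  # [('pulled', 3), ('normalize', 3), ('dedupe', 2), ('validate', 1)]
```

A sudden drop at one stage in the report is usually the first sign of a changed query, a provider glitch, or a broken field mapping.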

Common mistakes that quietly hurt deliverability and results

Small data choices can snowball into bounces, spam placement, and low reply rates. The goal isn’t to pull more records. It’s to pull records you can trust, trace, and target.

Data mistakes that look harmless

A common issue starts when teams use two or three data providers but never decide how a lead is uniquely identified. Provider A might call a company “Acme Inc” while provider B calls it “ACME,” and you end up emailing the same person twice. Pick a shared ID approach early (for example: normalize company domain + email, and keep each provider’s original IDs in separate fields).

Skipping suppression is another problem that shows up late. If you don’t apply your unsubscribe list, past hard bounces, and internal do-not-contact notes every single time, you will re-add people. That’s a fast way to hurt sender reputation.

Job titles are messy too. Titles like “Head of Growth,” “Growth Lead,” and “VP Growth” can describe similar roles, but filters treat them as different. Without title and seniority normalization, you target the wrong level and results look random.

Mistakes that usually surface when it’s harder to fix them:

- No consistent unique ID plan across providers, so dedupe fails

- Suppression lists not applied at import time

- Title and seniority used as-is, without normalization rules

- Unvalidated emails sent “as a test,” then bounces spike

- Very different segments mixed into one sequence and one message

Process mistakes that break learning

Mixing segments is a big one. If you put founders, IT managers, and recruiters into the same sequence, the copy fits nobody. Replies get noisy and you won’t know what to improve.

Record the pull date and query details every time. If you can’t answer “when did we pull this, from where, and with what filters?”, you can’t debug later. If bounce rate jumps this week, those details are how you figure out whether the issue came from a new provider, a new query, or a change in validation.

Quick checks and next steps before you launch outreach

Before you hit send, do a fast sanity pass on your outbound list. This is where prospect sourcing via APIs either turns into predictable meetings or into bounces, spam flags, and awkward double-touches.

Confirm the basics are truly done:

- Required fields are present (name, company, role, email, source, and at least one personalization hook)

- Dedupe is complete (same person, same company, common variants)

- Email validation is complete and stored (valid, risky, invalid, unknown)

- Suppression is applied (unsubscribes, do-not-contact, past bounces, internal exclusions)

- Segments are labeled (ICP tier, industry, role, region, use case)

Then spot-check 20 records by hand across segments, not just the top of the file. Ask two questions: do the roles match who you actually sell to, and do the companies fit your ICP (size, location, industry, exclusions)? If you find obvious mismatches in 3 to 5 out of 20, pause and fix the rules before you scale.
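Picking the spot-check sample across segments is easy to automate so nobody just reads the first 20 rows. This sketch assumes each record carries a `segment` label; the function name and defaults are illustrative.

```python
import random
from collections import defaultdict


def spot_check_sample(records, total=20, segment_key="segment", seed=None):
    """Pick about `total` records spread evenly across segments for manual review."""
    rng = random.Random(seed)
    by_segment = defaultdict(list)
    for rec in records:
        by_segment[rec.get(segment_key, "unlabeled")].append(rec)
    per_segment = max(1, total // max(1, len(by_segment)))
    sample = []
    for rows in by_segment.values():
        sample.extend(rng.sample(rows, min(per_segment, len(rows))))
    return sample[:total]
```

With an uneven number of segments the sample can come out slightly under `total`, which is fine for a manual review.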

Next, look at the size of your bounce-risk buckets. If risky or unknown emails are more than a small slice of the list, separate them into their own segment. Send to the cleanest segment first, and hold the risky segment until you can enrich or re-validate.

Launch with small batches

Even with a clean list, ramp up gradually. Start with a small batch, watch bounces and replies for a day or two, then increase volume. This gives you time to catch problems like a broken field mapping or a provider glitch.
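The ramp can be tied to a stop rule so volume only grows while bounces stay low. In this sketch the 2% bounce limit, the 1.5x growth factor, and the 500-send ceiling are placeholder values, not recommendations; set them from your own targets.

```python
def next_batch_size(current: int, sent: int, bounced: int,
                    max_bounce_rate: float = 0.02, growth: float = 1.5,
                    ceiling: int = 500) -> int:
    """Return the next batch size, or 0 to pause when bounces exceed the limit."""
    if sent == 0:
        return current  # nothing observed yet, so keep the same size
    if bounced / sent > max_bounce_rate:
        return 0  # stop rule: fix the list before sending more
    return min(ceiling, int(current * growth))
```

For example, a clean first batch of 50 would grow to 75, while 3 bounces out of 50 would return 0 and pause sending.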

Make it repeatable

Once your list process is stable, the operational side gets easier when your sending stack is centralized. For example, LeadTrain (leadtrain.app) combines domains, mailboxes, warm-up, multi-step sequences, and AI-powered reply classification in one place, which helps teams run outbound without juggling several separate tools.

FAQ

What’s the simplest way to define an ICP before pulling leads from an API?

Start with one sentence you can apply while scanning rows: industry, headcount range, role level, and region. If you can’t quickly say “yes or no” to a record, tighten the rule until you can.

What fields should be “required” before a lead can go into a cold email sequence?

Default to the minimum you need to send a relevant first email: first name, company name, job title, company domain, and a deliverable email (or a clear path to get one). If any of those are missing, route the record to enrichment instead of guessing.

What metadata should I save with every API pull?

Store the provider name, the exact filters you used, the timestamp, and the provider’s person/company IDs. That audit trail is what lets you explain duplicates, trace bad emails, and reproduce a good pull later.

What does “normalizing” API lead data actually mean?

Normalizing means converting every provider’s output into one consistent format for each key field. Do it before you clean so your dedupe and validation rules work consistently. The practical goal is one canonical company domain, clean company and person names, standardized countries, and titles mapped into the categories you actually target.

How do I dedupe leads so I don’t double-send to the same person?

Start with hard matches like exact email and provider person ID, then add soft matches like full name plus company domain (or LinkedIn URL if you have it). Also check against your full send history so you don’t re-email someone who was already contacted last month.

What should I do when two duplicate records disagree on title or email?

Keep one record and merge. Use the newest data for time-sensitive fields like title and company, and keep the most complete set of fields for everything else while preserving the original source and timestamps so you can audit changes.

Are catch-all domains safe to send to after email validation?

Treat catch-all as “unknown risk,” not as valid. The safest default is to hold catch-all addresses out of your main campaign and only test them in a smaller, lower-volume batch after you’ve proven deliverability is stable.

What should be on my suppression list before I upload leads?

Include unsubscribes, do-not-contact notes, past hard bounces, current customers, and active opportunities. Apply the list at import time on every single run, not later, so none of them enter a sending queue by accident.

How should I segment an API-sourced list so the emails feel targeted?

Segment by things that change your message or timing, like role responsibilities, company size tier, region/time zone, and any clear trigger. If a segment wouldn’t change your opener or pitch, it’s probably not a useful segment.

How do I safely ramp up sending volume after building a clean list?

Start small, watch bounce rate and reply quality for a day or two, then ramp volume gradually. If you want one place to manage the sending side, LeadTrain centralizes domains, mailboxes, warm-up, sequences, and reply classification so the workflow stays consistent as you scale.