Outbound market testing: validate segments before you build

Use outbound market testing to compare verticals by real conversations, measure reply quality, and pick the best segment before building new features.

The problem: choosing a segment based on guesses

Picking a customer segment is one of the most expensive early decisions you’ll make. If you build features, pricing, and a sales story for the wrong group, you don’t just waste dev time. You end up with a product that feels slightly off to everyone, and fixing that later is slow.

A common trap is choosing a segment because it sounds big, familiar, or easy to reach. Another is copying a competitor’s target without knowing if those buyers will talk to you, trust you, or pay.

Surveys and friendly interviews can mislead, even when people mean well. Many answers are opinions about a hypothetical product, not signals of real intent. People also say “yes, I’d use that” to be polite, and the way they describe a problem often differs from how they actually buy.

Outbound market testing helps because it replaces opinions with behavior. Instead of asking what someone might do, you run small, controlled outreach to see who engages when the offer is clear and the next step is simple.

A short outbound test won’t tell you everything. It won’t prove product-market fit or predict long-term retention. But it can quickly show which verticals produce real traction and which ones go nowhere.

When people say “conversation quality,” they mean signs of buying energy, not just activity. Look for replies that do things like:

- Confirm the problem in their own words

- Ask about price, timing, or requirements

- Share real details (tools, process, constraints)

- Commit to a next step with specifics

- Redirect you to the right person instead of brushing you off

If one segment gives you vague replies while another leads to detailed questions and clear next steps, that difference is often more valuable than any click or open rate.

What you’re testing (and what you’re not)

Outbound market testing isn’t about proving product-market fit. It’s about reducing risk before you spend months building for the wrong people. You’re trying to learn which group is most likely to have a real conversation, using a small, controlled campaign.

A quick way to keep terms straight:

- Segment: a broad group with shared traits (for example, “B2B services”)

- Vertical: an industry slice inside a segment (for example, “accounting firms”)

- Persona: the role you email (for example, “owner” vs “operations manager”)

The core rule: change one thing at a time. If you’re comparing verticals, keep persona, offer, and sending setup consistent. Otherwise you won’t know what caused the result.

What a testable hypothesis looks like

A useful hypothesis is specific, measurable, and tied to a decision.

Example: “If we email operations leaders at small logistics companies, we’ll get more qualified replies than from operations leaders at small property management firms, using the same message and lead quality.”

That’s better than: “Logistics seems promising.”

Think in terms of expected behavior, not opinions. You’re testing whether a group:

- Understands the problem quickly

- Admits they have it

- Asks follow-up questions that hint at budget, urgency, or authority

What you’re not testing yet

You’re not validating your final positioning, pricing, onboarding, or retention. You’re also not validating every feature. A strong outbound signal can still fail later if the product can’t deliver.

Treat this as a filter: “Which door is worth opening next?” Deeper validation (calls, trials, pilots) comes after.

Set a clear pass/fail rule before you send

Decide what “good” looks like in advance, and base it on conversation quality, not vanity metrics.

For example:

- Pass if at least 8% of delivered emails get a human reply, and at least 30% of those replies are “interested” or “tell me more”

- Fail if most replies are “wrong person,” “not relevant,” or polite deflections

The important part is the rule. You should be able to choose a vertical based on the outcome, not your gut.
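Writing the rule down as code is one way to make it binding before any replies arrive. Below is a minimal Python sketch, assuming you already count delivered emails, human replies, “interested” replies, and deflections per vertical. The 8% and 30% thresholds are the example numbers from the text; reading “most replies” as “more than half” is an assumption, and `PassFailRule` and `passes` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PassFailRule:
    # Example thresholds from the text; replace them with the ones you commit to before sending.
    min_human_reply_rate: float = 0.08   # human replies / delivered emails
    min_interested_share: float = 0.30   # "interested" or "tell me more" / human replies
    # Assumption: "most replies are deflections" read as more than half of human replies.
    max_deflection_share: float = 0.50


def passes(rule: PassFailRule, delivered: int, human_replies: int,
           interested: int, deflections: int) -> bool:
    """Apply a pre-registered pass/fail rule to one vertical's counts.

    `deflections` counts "wrong person", "not relevant", and polite brush-off replies.
    """
    if delivered <= 0 or human_replies <= 0:
        return False  # no human conversations means no evidence to pass on
    reply_rate = human_replies / delivered
    interested_share = interested / human_replies
    deflection_share = deflections / human_replies
    return (reply_rate >= rule.min_human_reply_rate
            and interested_share >= rule.min_interested_share
            and deflection_share <= rule.max_deflection_share)


rule = PassFailRule()
print(passes(rule, delivered=120, human_replies=12, interested=5, deflections=4))  # True
```

Because the thresholds live in a frozen object created before launch, changing them mid-test means visibly editing the rule rather than quietly reinterpreting results.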

Design a small controlled experiment

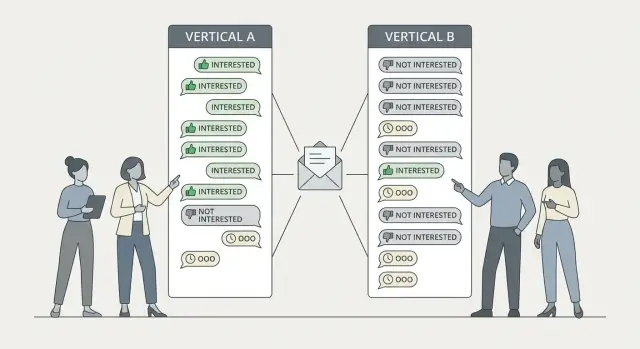

A controlled experiment is the simplest form of outbound market testing: you change one thing (the vertical) and hold everything else as steady as you can. Then, when one vertical “wins,” you can trust it was the segment, not a different offer or a better-written email.

Start with 2 to 4 plausible verticals you can describe in one sentence each. Avoid comparing verticals that would require totally different products or price points, because you’ll end up rewriting the pitch to fit each one.

Keep your offer identical across verticals: same outcome, same call to action, same structure. You can swap a couple of words to sound native (for example, “patients” vs “customers”), but don’t change the core promise.

Pick one primary metric before you send. A solid default is:

- Qualified positive replies per 100 emails sent

Where “qualified” means they have the problem and have authority (or can introduce you to the decision-maker).
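One way to pin down that definition is to record a few yes/no judgments for each reply and compute the metric from them. This is a sketch, not a standard schema: `ReviewedReply` and its fields are hypothetical, and the judgments are assumed to come from a person reading each reply.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ReviewedReply:
    # Filled in by whoever reads the reply; these fields are illustrative, not a standard schema.
    positive: bool                      # shows interest or asks for a next step
    has_problem: bool                   # confirms the pain you solve
    has_authority: bool                 # can decide or buy
    can_introduce_decision_maker: bool  # can route you to whoever decides


def is_qualified_positive(r: ReviewedReply) -> bool:
    """Qualified = positive, has the problem, and has authority or can make the intro."""
    return r.positive and r.has_problem and (r.has_authority or r.can_introduce_decision_maker)


def qualified_positive_per_100(replies: Iterable[ReviewedReply], emails_sent: int) -> float:
    """Primary metric: qualified positive replies per 100 emails sent."""
    if emails_sent <= 0:
        raise ValueError("emails_sent must be positive")
    qualified = sum(1 for r in replies if is_qualified_positive(r))
    return 100 * qualified / emails_sent
```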

A clean experiment setup:

- 2 to 4 verticals

- 80 to 150 prospects per vertical

- One offer, one CTA, one sequence structure

- Send in a randomized order so one vertical doesn’t get “better days”

- Time-box to 7 to 14 days, then stop and review
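The randomization and time box can be planned before sending. This minimal sketch assumes leads are plain strings grouped by vertical and that a fixed daily quota per vertical (the hypothetical `per_vertical_per_day` parameter) is acceptable; `daily_batches` is an illustrative name. It shuffles within each vertical, gives every day an equal share from each, then shuffles the order within the day. It plans first-touch emails only; follow-ups are scheduled separately.

```python
import random
from typing import Dict, List, Tuple

MAX_SEND_DAYS = 14  # upper end of the 7-14 day time box in the text


def daily_batches(leads_by_vertical: Dict[str, List[str]],
                  per_vertical_per_day: int,
                  seed: int = 42) -> List[List[Tuple[str, str]]]:
    """Plan first-touch sends: every day gets the same quota from each vertical,
    and the order within each day is shuffled."""
    if per_vertical_per_day <= 0:
        raise ValueError("per_vertical_per_day must be positive")
    rng = random.Random(seed)  # fixed seed so the plan is reproducible
    # Shuffle inside each vertical so list order (e.g. sorted by company size) doesn't leak into timing.
    queues = {v: rng.sample(leads, len(leads)) for v, leads in leads_by_vertical.items()}
    batches: List[List[Tuple[str, str]]] = []
    while any(queues.values()):
        batch: List[Tuple[str, str]] = []
        for v in list(queues):
            take, queues[v] = queues[v][:per_vertical_per_day], queues[v][per_vertical_per_day:]
            batch.extend((v, lead) for lead in take)
        rng.shuffle(batch)  # no vertical is always sent first in the day
        batches.append(batch)
    if len(batches) > MAX_SEND_DAYS:
        raise ValueError(f"{len(batches)} sending days exceeds the {MAX_SEND_DAYS}-day time box")
    return batches
```

Because each day draws the same number of leads from every vertical, this assumes the lists were already capped to equal size; with unequal lists, the last days would be lopsided.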

Define your reply buckets up front so you don’t move the goalposts mid-test. Keep it simple:

- Qualified yes: asks for a call, pricing, or concrete next steps

- Qualified maybe: interested, but needs timing or a bit more detail

- Not qualified: wrong role, wrong company type, or no clear pain

- Neutral noise: out-of-office, unsubscribe, bounce
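Encoding the buckets as a closed set makes it hard to add a new category halfway through the test. A small Python sketch, with `Bucket` and `tally` as hypothetical names; the label strings are an assumption about how you would tag replies.

```python
from collections import Counter
from enum import Enum
from typing import Dict, Iterable


class Bucket(Enum):
    QUALIFIED_YES = "qualified_yes"      # asks for a call, pricing, or concrete next steps
    QUALIFIED_MAYBE = "qualified_maybe"  # interested, needs timing or more detail
    NOT_QUALIFIED = "not_qualified"      # wrong role, wrong company type, or no clear pain
    NEUTRAL_NOISE = "neutral_noise"      # out-of-office, unsubscribe, bounce


def tally(labels: Iterable[str]) -> Dict[Bucket, int]:
    """Count reply labels per bucket. Bucket(label) raises ValueError for any label
    outside the fixed set, so new categories can't be invented mid-test."""
    counts = Counter(Bucket(label) for label in labels)
    return {bucket: counts[bucket] for bucket in Bucket}
```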

How to build comparable lead lists

If your lead lists are uneven, your results will be uneven. The goal is fairness: each vertical gets a real shot, so differences come from the market, not from list quality.

Start by writing a short “ideal customer” checklist for each vertical. Use criteria you can verify from a profile or website, not vague guesses like “companies that value innovation.”

Use the same structure for every vertical:

- One company size band (for example, 11-50)

- One geography or time zone

- 2 to 3 job titles

- One clear trigger (hiring, a known tool, a specific service)

- Exclusions (for example, agencies, freelancers, or enterprise)

Build small lists with the same number of leads per vertical. If you can only source 80 good leads in one vertical but 300 in another, cap both at 80. This keeps comparisons clean.
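Capping is easy to get subtly wrong by taking the first N rows of a sorted export. A minimal sketch, assuming each vertical's list has already been filtered by the checklist above; `cap_to_smallest` is a hypothetical helper.

```python
import random
from typing import Dict, List, TypeVar

T = TypeVar("T")


def cap_to_smallest(lists: Dict[str, List[T]], seed: int = 7) -> Dict[str, List[T]]:
    """Trim every vertical's list to the size of the smallest one.

    Uses a random sample rather than the first N rows, so a list sorted by
    size or source doesn't bias which leads survive the cap."""
    if not lists:
        return {}
    n = min(len(leads) for leads in lists.values())
    rng = random.Random(seed)  # fixed seed so the cap is reproducible
    return {vertical: rng.sample(leads, n) for vertical, leads in lists.items()}
```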

Keep seniority comparable. If one list targets founders at 1-10 employees and the other targets VPs at 200+, you’re testing seniority and budget as much as the vertical.

Finally, tag every lead so you can read results without guesswork. At minimum, you should be able to filter replies by:

- Vertical

- Persona

- Company size band

- List source

- Variant (A/B)
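A flat record per lead is enough to make those filters trivial. In this sketch, `Lead` and `reply_rate_by` are hypothetical names, and `replied` is assumed to be the set of addresses that sent a human reply. It reports rates rather than raw counts, so groups of different sizes stay comparable.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable, Set


@dataclass(frozen=True)
class Lead:
    email: str
    vertical: str
    persona: str
    size_band: str    # e.g. "11-50"
    list_source: str
    variant: str      # "A" or "B"


def reply_rate_by(leads: Iterable[Lead], replied: Set[str], tag: str) -> Dict[str, float]:
    """Reply rate grouped by one tag, e.g. tag="vertical" or tag="list_source"."""
    leads = list(leads)
    sent = Counter(getattr(lead, tag) for lead in leads)
    got = Counter(getattr(lead, tag) for lead in leads if lead.email in replied)
    return {value: got[value] / sent[value] for value in sent}
```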

Write messages that isolate the vertical effect

If your goal is market testing, your emails need to behave like a lab test. The easiest way to ruin the result is to write completely different messages for each vertical. Then you don’t learn which segment is better; you learn which copy was better.

Start with one email skeleton and keep it identical across verticals: same length, tone, CTA, and number of follow-ups. The only change should be a small vertical-specific line that proves relevance.

A simple skeleton:

- One line showing you picked them for a reason (the vertical-specific line)

- One line naming the problem you solve (keep wording stable)

- One line of proof (a generic result or credibility line)

- One easy ask they can answer in a reply

That first line is your single variable.

Example first lines:

- For dentists: “Noticed you offer same-day implants - do you still rely on referrals for most new patients?”

- For accountants: “Saw you handle cross-border filings - are you taking on new recurring clients this quarter?”
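Treating the skeleton as a template makes the single variable explicit and lets you check drafts before launch. A minimal sketch: the placeholder body lines stand in for your own copy, the two openers are the examples above, and `render_email` and `only_first_line_differs` are hypothetical names.

```python
# Lines shared by every vertical; the placeholders stand in for your own copy.
SHARED_LINES = [
    "<one line naming the problem you solve>",
    "<one line of proof or credibility>",
    "Are you the right person for this?",
]

# The single variable: one relevance line per vertical (examples from the text).
RELEVANCE_LINES = {
    "dentists": "Noticed you offer same-day implants - do you still rely on "
                "referrals for most new patients?",
    "accountants": "Saw you handle cross-border filings - are you taking on new "
                   "recurring clients this quarter?",
}


def render_email(vertical: str) -> str:
    """Build the first-touch email: vertical-specific opener + identical body."""
    return "\n".join([RELEVANCE_LINES[vertical], *SHARED_LINES])


def only_first_line_differs(a: str, b: str) -> bool:
    """Sanity check before launch: drafts may differ only in the opener."""
    la, lb = a.splitlines(), b.splitlines()
    return len(la) == len(lb) and la[1:] == lb[1:]
```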

Keep the ask reply-first, not meeting-first. An early segment test needs conversations. Good asks include:

- “Worth a quick chat?”

- “Should I send a 2-sentence idea?”

- “Are you the right person for this?”

Keep the sequence short. Two to three follow-ups are plenty:

- Follow-up 1 (2-3 days later): restate the ask in one line

- Follow-up 2 (4-6 days later): add one extra detail or tiny example

- Optional bump (a week later): “Should I close the loop?”
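If you schedule by hand or in a script, it helps to turn the ranges into fixed gaps so every vertical gets the identical cadence. This sketch assumes one point in each range (3, 5, and 7 days) and that each gap is counted from the previous step; both are choices, not rules from the text. `CADENCE` and `sequence_dates` are hypothetical names, and the sketch does not skip weekends.

```python
from datetime import date, timedelta
from typing import List, Tuple

# Assumed cadence picked from the ranges in the text. Each gap is counted
# from the previous step (the text doesn't say which way it's measured).
CADENCE = [
    ("initial", 0),
    ("follow_up_1", 3),   # 2-3 days later: restate the ask in one line
    ("follow_up_2", 5),   # 4-6 days later: one extra detail or tiny example
    ("bump", 7),          # optional, a week later: "Should I close the loop?"
]


def sequence_dates(start: date, include_bump: bool = True) -> List[Tuple[str, date]]:
    """Dates for each step for a lead whose first email goes out on `start`.
    A real scheduler would also stop the sequence once the lead replies."""
    out: List[Tuple[str, date]] = []
    day = start
    for step, gap in CADENCE:
        if step == "bump" and not include_bump:
            continue
        day += timedelta(days=gap)
        out.append((step, day))
    return out
```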

Run the campaign without deliverability noise

If your emails land in spam, your test is broken. You’re no longer comparing verticals; you’re comparing inbox placement. Treat deliverability like lab conditions: stable and consistent across groups.

Warm up every mailbox before the test. New domains and fresh inboxes need time to build a reputation. If one vertical is sent from a warmed mailbox and the other isn’t, any difference in replies is meaningless.

Keep sending volume and timing consistent:

- Split daily sends evenly across verticals

- Send on the same weekdays and within the same time window

- Avoid sudden spikes in volume

- Keep subject line style consistent

Watch bounces and unsubscribes like warning lights. High bounces usually mean list quality issues and can damage future sends. A spike in unsubscribes often means your targeting or relevance is off, even if open rates look fine.
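Those warning lights can be checked per vertical on each day of the test. A minimal sketch with a hypothetical `warning_lights` function; the 3% bounce and 1% unsubscribe ceilings are placeholder assumptions, not numbers from this article or from any mailbox provider, so set your own before sending.

```python
from typing import List

# Placeholder thresholds for illustration only; the article doesn't give numbers.
MAX_BOUNCE_RATE = 0.03
MAX_UNSUBSCRIBE_RATE = 0.01


def warning_lights(sent: int, bounced: int, unsubscribed: int) -> List[str]:
    """Return which red flags a vertical trips; an empty list means no warnings."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    flags = []
    if bounced / sent > MAX_BOUNCE_RATE:
        flags.append("high_bounce")        # usually a list-quality problem
    if unsubscribed / sent > MAX_UNSUBSCRIBE_RATE:
        flags.append("high_unsubscribe")   # often a targeting or relevance problem
    return flags
```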

If every vertical gets the same sending conditions, any gap you see in reply quality is much more likely to be real.

Measure conversation quality, not vanity numbers

A high reply rate can fool you. One vertical might reply a lot just to say “not interested,” while another replies less but shows real intent. Treat replies as data you still need to interpret.

Start by sorting every reply into simple buckets:

- Positive: shows interest or asks a real question

- Neutral: polite but non-committal (for example, “send details”)

- Negative: clear rejection or annoyance

- Out-of-office: ignore for quality scoring

- Bounce/unsubscribe: list hygiene and deliverability signals

Then score the positive and neutral replies for “buyer language”:

- They describe a specific pain in their own words

- They mention tools, workflow, or constraints that suggest fit

- They hint at budget or authority (pricing, procurement, decision-makers)

- They give timing (this month, next quarter, after hiring)

- They propose a next step (call, intro, sharing data)
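Scoring can stay simple: one flag per signal, set by whoever reads the reply, summed into a 0-5 score. This sketch deliberately avoids keyword matching, which tends to misread short replies. `BuyerSignals`, `buyer_score`, and `mean_score_by_vertical` are hypothetical names, and weighting every signal equally is an assumption.

```python
from collections import defaultdict
from dataclasses import dataclass, fields
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class BuyerSignals:
    # One flag per buyer-language signal, set by a person reading the reply.
    specific_pain: bool = False         # pain described in their own words
    tools_or_constraints: bool = False  # tools, workflow, or constraints that suggest fit
    budget_or_authority: bool = False   # pricing, procurement, decision-makers
    timing: bool = False                # this month, next quarter, after hiring
    next_step: bool = False             # call, intro, sharing data


def buyer_score(s: BuyerSignals) -> int:
    """0-5: how many signals the reply shows (each signal weighted equally)."""
    return sum(bool(getattr(s, f.name)) for f in fields(s))


def mean_score_by_vertical(scored: Iterable[Tuple[str, BuyerSignals]]) -> Dict[str, float]:
    """Average buyer-language score of positive and neutral replies, per vertical."""
    totals: Dict[str, List[int]] = defaultdict(list)
    for vertical, signals in scored:
        totals[vertical].append(buyer_score(signals))
    return {v: sum(scores) / len(scores) for v, scores in totals.items()}
```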

Don’t stop at replies. Track meetings booked (or qualified demos) per 1,000 emails sent. A vertical with fewer replies but more meetings is often the better bet.
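The normalization is simple arithmetic, but writing it down keeps verticals with different send volumes comparable. The numbers in the usage lines are made up purely to illustrate the “fewer replies, more meetings” case; `Funnel`, `per_1000`, and `rank_by_meetings` are hypothetical names.

```python
from typing import Dict, List, NamedTuple


class Funnel(NamedTuple):
    sent: int
    replies: int
    meetings: int


def per_1000(count: int, sent: int) -> float:
    """Normalize any count to a rate per 1,000 emails sent."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return 1000 * count / sent


def rank_by_meetings(funnels: Dict[str, Funnel]) -> List[str]:
    """Verticals ordered by meetings booked per 1,000 emails, best first."""
    return sorted(funnels, key=lambda v: per_1000(funnels[v].meetings, funnels[v].sent),
                  reverse=True)


# Made-up numbers, only to illustrate the ranking logic.
funnels = {"A": Funnel(sent=400, replies=40, meetings=2),
           "B": Funnel(sent=400, replies=20, meetings=4)}
print(rank_by_meetings(funnels))  # ['B', 'A']
```

Here vertical A has twice the replies per 1,000 emails, yet B ranks first because it books twice the meetings.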

Also compare objection patterns by vertical. If one group repeatedly says “we already have a vendor” or “we don’t do outbound,” that’s not just a one-off objection. It’s a segment-level warning.
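Tallying objections per vertical makes a repeated objection visible instead of anecdotal. A sketch, assuming each negative or neutral reply gets one objection label; the labels, the 40% threshold, and the function names are all hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Tuple

# Assumption for illustration: flag an objection that makes up 40%+ of a vertical's objections.
SEGMENT_WARNING_SHARE = 0.4


def objection_shares(tagged: Iterable[Tuple[str, str]]) -> Dict[str, Dict[str, float]]:
    """tagged: (vertical, objection_label) pairs, labeled by whoever reads the replies."""
    by_vertical: Dict[str, Counter] = defaultdict(Counter)
    for vertical, label in tagged:
        by_vertical[vertical][label] += 1
    return {v: {label: n / sum(c.values()) for label, n in c.items()}
            for v, c in by_vertical.items()}


def segment_warnings(shares: Dict[str, Dict[str, float]],
                     threshold: float = SEGMENT_WARNING_SHARE) -> Dict[str, List[str]]:
    """Objections common enough to look like a pattern rather than a one-off."""
    return {v: [label for label, share in s.items() if share >= threshold]
            for v, s in shares.items()}
```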

Common mistakes that ruin the results

Market testing needs discipline. If you mix variables, you stop learning and start guessing.

The big ones:

- Changing too many things at once: different offer, different email length, different sender name

- Unequal lead quality: one list is clean and targeted, the other is messy and stale

- Declaring a winner too early: a handful of replies is noisy

- Ignoring negative signals: high unsubscribes, angry replies, spam complaints

- Deliverability differences: one vertical sent from a warmed mailbox, the other from a new one

A practical habit: track a few red flags alongside your positive metrics (bounce rate, unsubscribe rate, spam complaints, and “not relevant” replies from the right persona).

If you avoid these mistakes, results tend to feel boring in the best way: clear enough to act on.

A realistic example: picking between two verticals

Say you have a product idea that helps teams reduce time spent on follow-ups. You’re torn between selling to marketing agencies or to B2B SaaS companies. Instead of debating for weeks, you run a test with one rule: keep everything the same except the vertical.

You use the same offer for both groups: a 15-minute call where you share a simple follow-up workflow and a template they can copy. The goal isn’t to close on the first email. The goal is to see which segment produces better conversations.

You build two campaigns: 100 agencies and 100 SaaS companies. You keep the same domain setup, schedule, and sequence steps. Only the opening line changes to signal “this is for you.”

After a week, open rates look similar. Clicks don’t matter here. Replies do.

You notice a pattern:

- Agencies reply more often with curiosity, but many conversations drift into “it depends.”

- SaaS replies are fewer, but the best ones are sharper and easier to qualify.

So you choose based on the traction you want next. If you want faster qualification and a clearer path to a repeatable pitch, you might prioritize SaaS. If you want more back-and-forth discovery and quicker early meetings, you might prioritize agencies.

The win isn’t “agencies vs SaaS.” The win is letting real conversations place your next bet.

Quick checklist and next steps

Treat this like a lab test, not a sales sprint. If you change too many things at once, you won’t know whether the vertical is good or the setup was just louder.

Before you send:

- Write one hypothesis per vertical

- Keep the offer the same across verticals

- Keep list size and filters consistent

- Pick one primary metric and define pass/fail in advance

- Set a minimum sample so you don’t overreact to a handful of replies
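One way to put a number on “a handful of replies is noisy” is a two-proportion z-test on qualified replies per email sent. The article doesn’t prescribe a statistical test; this is a standard technique swapped in for illustration, using only Python’s `math` module, and `two_proportion_p_value` is a hypothetical name. With 80 to 150 prospects per vertical, expect many real-looking gaps to come out as not significant, which is exactly the warning against overreacting.

```python
import math


def two_proportion_p_value(x1: int, n1: int, x2: int, n2: int) -> float:
    """Two-sided p-value for H0: both verticals share the same underlying rate.

    x = qualified replies, n = emails sent. Uses the normal approximation, which is
    shaky when counts are small (a common rule of thumb wants roughly 5+ per cell)."""
    if min(n1, n2) <= 0:
        raise ValueError("sample sizes must be positive")
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        return 1.0  # both rates are 0% (or both 100%): no evidence of a difference
    z = (x1 / n1 - x2 / n2) / se
    return math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))


# 6 vs 2 qualified replies out of 100 emails each: p is roughly 0.15, weak evidence.
print(round(two_proportion_p_value(6, 100, 2, 100), 3))
```

A p-value above your chosen cutoff doesn’t mean the verticals are equal, only that this sample can’t separate them; the options are to extend the test or to weigh reply quality alongside the counts.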

A strong default primary metric is conversation quality: the share of replies that include a clear problem, a request for details, or willingness to meet. Put everything else (opens, clicks, polite rejections) in the notes.

After the test, make the next obvious move: scale the winner and narrow your ICP. Keep the vertical, then refine one dimension at a time (job title first, then company size, then triggers). Only then adjust the offer or pricing.

If you want to keep the mechanics consistent, a single platform like LeadTrain (leadtrain.app) can handle domains, mailboxes, warm-up, multi-step sequences, and reply classification in one place, so your results reflect the market rather than a messy setup.

FAQ

What is outbound market testing, in plain terms?

Outbound market testing is a small, controlled outreach experiment to see which segment or vertical creates real conversations when the offer is clear. It helps you stop guessing and start using behavior as your signal before you build a lot of product for the wrong people.

What should outbound market testing tell me (and what shouldn’t it)?

It’s about reducing early risk, not proving product-market fit. A good test tells you which group is worth deeper discovery next, like calls, pilots, or a focused MVP.

What’s the simplest “controlled experiment” setup for comparing segments?

A simple default is to compare verticals while keeping persona, offer, and sending setup the same. If you change too many things at once, you won’t know whether the vertical won or the copy, list quality, or timing did.

What metric should I use if I care about “conversation quality”?

Use a primary metric tied to intent, like qualified positive replies per 100 emails sent. Opens and clicks can be noisy; a vertical that replies less but asks concrete questions often beats a vertical that replies a lot with polite brush-offs.

How do I set a pass/fail rule before I run the test?

Pick a rule you can act on, such as a minimum human reply rate and a minimum share of replies that show real interest. The key is deciding the rule before you send so you don’t talk yourself into a winner after the fact.

How do I build lead lists that make the test fair?

Make each list comparable by using the same company size band, geography or time zone, and similar seniority for the role you email. Cap the list size to the smallest usable vertical so you’re comparing like with like, not “better data” versus “worse data.”

How do I write messages that isolate the vertical as the only variable?

Use one email structure across all verticals and only swap a small relevance line at the top. If you rewrite the whole pitch per vertical, you learn which email was stronger, not which market is more responsive.

How do I avoid deliverability problems ruining my results?

Warm up mailboxes first and keep volume and timing steady so inbox placement doesn’t skew results. If one vertical gets more spam-folder placement, the comparison becomes meaningless even if your targeting is perfect.

What do bounces, unsubscribes, and negative replies mean during a test?

Treat bounces as a list quality problem and unsubscribes as a relevance warning. If a vertical triggers lots of “not relevant” replies or a high unsubscribe rate, that’s often a segment-level signal, not just bad luck.

After I pick a winning vertical, what’s the next step?

Use the winner to narrow your ICP one dimension at a time, usually job title first, then company size, then triggers. Only after you have a clear, repeatable conversation should you start making bigger changes to the offer, pricing, or positioning.