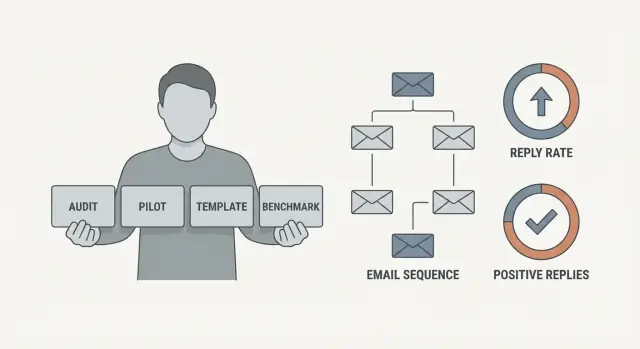

Cold email offer testing: audit, pilot, template, benchmark

A framework for testing cold email offers: compare audit, pilot, template, and benchmark offers fairly by keeping list quality and metrics the same.

Why test the offer, not just the subject line

A subject line can win the open, but it rarely wins the reply. Most cold emails die after the open because the offer is unclear, feels risky, or simply isn’t worth someone’s time.

The offer is the real decision: “Do I want this enough to respond to a stranger?” You see that decision in reply quality, not open rate. A clever subject line can lift opens, but a weak offer still gets you silence, vague “maybe later” replies, or quick brush-offs.

Different offers also attract different kinds of “yes.” An audit pulls people who want quick feedback. A pilot attracts buyers who are ready to try something. A template appeals to do-it-yourself teams. A benchmark report draws prospects who want context before they talk.

When you test offers, track outcomes that reflect real intent:

- Positive replies (clear interest, questions, requests for details)

- Meeting intent (availability, “can we talk?”)

- Qualified interest (right persona, real pain)

- Negative signals (not relevant, stop emailing, unsubscribes)

Offer tests go wrong when list quality changes between rounds. If one offer goes to better accounts or to contacts pulled from fresher data, it will look like the winner even if it isn’t. That creates false confidence and results you can’t repeat.

A simple example: you test a “free audit” on a clean list of mid-market companies with active hiring, then test a “pilot program” on a mixed list that includes tiny firms and old contacts. The audit “wins,” but you mostly tested list quality, not the offer.

Keep the goal tight: learn which offer earns the most positive replies from the same type of prospect.

The four offers and what they signal to prospects

When you change the offer, you change what the reader thinks you want from them. Not just money, but time, trust, and attention. A good test compares offers that feel meaningfully different while keeping the rest of the email steady.

Audit: low-commitment diagnosis

An audit is a “show me what’s wrong” offer. It works best when the scope is narrow and the output is concrete, like “a 1-page deliverability checklist” or “3 specific gaps in your follow-up sequence.”

The signal is low effort for them and moderate effort for you. It can build trust quickly because you’re offering insight before asking for a call. But if it’s vague (“free audit”), it can feel like a trap that leads straight into a pitch.

Pilot: a time-boxed trial

A pilot says, “Let’s try this in a small, safe way.” It can be paid or free, but it should be limited by time or size, like “2 weeks,” “one team,” or “one segment.” Say who qualifies so it doesn’t sound like you’re begging anyone to take it.

The signal is that more trust is required, but the perceived value is higher. A pilot implies you’ll do real work and produce results. It can also create friction if the next step feels heavy (contracts, setup, meetings).

Template: a ready-to-use asset

A template is “here’s something you can use today.” Think scripts, checklists, calculators, or a short playbook. Name the job it helps them do, not the file type, like “a 5-email reactivation sequence for dormant leads.”

The signal is fast value with very low effort. Templates can earn quick replies, but they can also attract freebie seekers. It helps to make the template specific to their role or situation.

Benchmark: a comparison report

A benchmark is “here’s how you compare.” It can be based on your dataset, public sources, or a small set of inputs they provide. What matters is the framing, like “average reply rate in your niche” or “common reasons prospects say no.”

The signal is credibility and authority, but also scrutiny. People will wonder where the numbers come from. If the data source is unclear, the offer can feel made up.

Across all four, you’re testing one thing: what promise feels worth a reply. Audit leans on clarity, pilot leans on commitment, template leans on speed, and benchmark leans on proof.

Keep list quality the same so the test is fair

The fastest way to get a fake “winner” is to send each offer to a different kind of prospect. An audit will look incredible if it goes to companies already shopping for help, while a template will look weak if it goes to people who never touch that problem.

Start with a tight audience and 1-2 personas. Keep it simple: one role you can name, one main pain, one reason they’d care right now.

Then lock your list source. Pick one place you’ll pull prospects from and don’t mix sources during the test. Different sources mean different freshness and accuracy, which can change results more than the offer itself.

Write down inclusion rules before you build the list and apply them to all four variants: role and seniority, industry, region/language, and company size. Clean the list the same way every time, too. Remove current customers, partners, and anyone you’ve had a real conversation with recently. Those people reply differently and can skew results.
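One way to make the inclusion rules hard to bend is to write them down as data and run every prospect through the same filter. The sketch below is illustrative only: the `Prospect` fields, the rule values, and the `build_list` helper are assumptions, not part of any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prospect:
    email: str
    role: str
    industry: str
    region: str
    employees: int


# Hypothetical rule values; write your own once, before building the list.
RULES = {
    "roles": {"HR Director"},
    "industries": {"retail", "logistics"},
    "regions": {"US"},
    "min_employees": 200,
    "max_employees": 1000,
}


def build_list(prospects, excluded_emails, rules=RULES):
    """Keep prospects that pass every rule and are not excluded or duplicated."""
    excluded = {e.lower() for e in excluded_emails}
    seen = set()
    kept = []
    for p in prospects:
        key = p.email.lower()
        # Customers, partners, and recent conversations go in excluded_emails.
        if key in excluded or key in seen:
            continue
        passes = (
            p.role in rules["roles"]
            and p.industry in rules["industries"]
            and p.region in rules["regions"]
            and rules["min_employees"] <= p.employees <= rules["max_employees"]
        )
        if passes:
            kept.append(p)
            seen.add(key)
    return kept
```

Because the rules live in one place, all four variants draw from a list that was built and cleaned the same way.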

Finally, randomize once and split evenly so each offer gets a similar mix of industries, sizes, and titles.

Decide what stays fixed and what you’ll measure

Offer tests only work when you treat them like a controlled experiment. If you change three things at once, you won’t know whether the audit “won” because it was better, or because it had a different sender name, a longer sequence, or a stronger ask.

Pick one primary metric. For most teams, the cleanest choice is positive reply rate: replies that clearly show interest, not just “thanks” or “stop.” It maps to intent and is less noisy than opens or clicks.

Add a few secondary metrics so you can spot hidden costs: total reply rate, meetings booked (or qualified calls), unsubscribes/spam complaints (if available), and bounce rate as a quick list-quality check.
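As a rough sketch, the primary and secondary metrics can be computed from a handful of counts per variant. The `VariantCounts` fields are hypothetical, and using delivered emails (sent minus bounced) as the denominator is an assumption; use whichever denominator you prefer, as long as it is the same for all four offers.

```python
from dataclasses import dataclass


@dataclass
class VariantCounts:
    sent: int
    bounced: int
    replies: int
    positive_replies: int
    meetings: int
    unsubscribes: int


def rates(c):
    """Return the primary metric and the secondary checks for one variant."""
    delivered = c.sent - c.bounced
    if c.sent <= 0 or delivered <= 0:
        raise ValueError("need at least one delivered email")
    return {
        "positive_reply_rate": c.positive_replies / delivered,  # primary metric
        "total_reply_rate": c.replies / delivered,
        "meeting_rate": c.meetings / delivered,
        "unsubscribe_rate": c.unsubscribes / delivered,
        "bounce_rate": c.bounced / c.sent,  # quick list-quality check
    }
```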

Hold the “plumbing” constant across all four offers: same sender identity and sending domain, same sequence length and timing (for example, 3 steps over 7-10 days), same CTA style, same targeting rules, and similar daily volume so deliverability doesn’t swing.

What should change? Only the offer and a small piece of proof that supports it.

Create comparable emails for audit, pilot, template, benchmark

For a fair test, the emails should feel like siblings, not strangers. Write one base email and swap only the offer block.

Start with one core problem statement you can reuse word-for-word. Pick a problem that’s specific and painful, but common across the whole list. Keep this identical in all four versions so you’re testing the offer, not the framing.

Keep the opening pattern, CTA shape, length, tone, and sender identity consistent. Then write the offer-specific value in 1-2 sentences. Aim to make each offer equally easy to understand and similar in effort for the reader.

Example offer lines with the same structure:

- Audit: “I can run a quick audit of your follow-up emails and point out 3 specific gaps that are costing replies.”

- Pilot: “I can set up a small 2-week pilot with a limited segment so you can see results before committing.”

- Template: “I can share the exact follow-up template we use, adapted to your product and audience.”

- Benchmark: “I can send a short benchmark report showing what reply rates look like for similar teams, plus 2 practical fixes.”

Add one proof point per offer and keep it simple (delivery time, format, scope). Don’t stack proof points on one variant, or it will look stronger for reasons unrelated to the offer.

Keep the CTA identical in shape across all four versions. One clear question usually works best: “Worth me sending it over?” or “Should I send the draft?”
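The "one base email, swap only the offer block" idea can be sketched with Python's standard `string.Template`. The bracketed problem statement and proof points are placeholders to replace with your own copy, and `OFFER_BLOCKS` and `render` are hypothetical names used only for illustration.

```python
from string import Template

# One base email; only $offer and $proof change between variants.
BASE_EMAIL = Template(
    "Hi $first_name,\n\n"
    "$problem\n\n"
    "$offer $proof\n\n"
    "$cta\n"
)

# Placeholders (assumptions): fill in your own shared problem line and proof points.
PROBLEM = "[one shared problem statement, identical in all four versions]"
CTA = "Worth me sending it over?"

OFFER_BLOCKS = {
    "audit": {
        "offer": "I can run a quick audit of your follow-up emails and point out "
                 "3 specific gaps that are costing replies.",
        "proof": "[proof point: scope]",
    },
    "pilot": {
        "offer": "I can set up a small 2-week pilot with a limited segment so you "
                 "can see results before committing.",
        "proof": "[proof point: duration]",
    },
    "template": {
        "offer": "I can share the exact follow-up template we use, adapted to your "
                 "product and audience.",
        "proof": "[proof point: format]",
    },
    "benchmark": {
        "offer": "I can send a short benchmark report showing what reply rates look "
                 "like for similar teams, plus 2 practical fixes.",
        "proof": "[proof point: data source]",
    },
}


def render(variant, first_name):
    """Build one email; everything except the offer block is shared."""
    block = OFFER_BLOCKS[variant]
    return BASE_EMAIL.substitute(
        first_name=first_name,
        problem=PROBLEM,
        offer=block["offer"],
        proof=block["proof"],
        cta=CTA,
    )
```

Calling `render("audit", "Sam")` and `render("pilot", "Sam")` yields emails that differ only in the offer sentence and its proof point, which is what the test is meant to isolate.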

Step-by-step: run the offer test as one controlled experiment

Run it as one test, not four separate campaigns

Start with one prospect list, shuffle it, then divide it into four equal groups. Each group should look similar: same mix of roles, company sizes, industries, and geographies.

Assign one offer per group (audit, pilot, template, benchmark) and don’t change the assignment mid-test. If you “help” one offer by giving it better accounts, you’re no longer testing the offer.

A simple flow:

- Randomize the list and split into four equal buckets

- Map one offer to each bucket and label them

- Send the same sequence structure to all buckets (same steps, same delays)

- Freeze other experiments during the window

- End the test only after each bucket hits the planned sample size
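The first two steps of the flow above can be sketched as a seeded shuffle followed by a round-robin deal. This is a minimal sketch: the `assign_offers` name, the seed value, and the choice to drop leftover prospects so the buckets stay exactly equal are all assumptions.

```python
import random

OFFERS = ("audit", "pilot", "template", "benchmark")


def assign_offers(prospect_ids, seed=42, offers=OFFERS):
    """Shuffle once with a fixed seed, then deal prospects into equal buckets."""
    # Sort first so the result depends only on the set of ids and the seed.
    ids = sorted(set(prospect_ids))
    rng = random.Random(seed)
    rng.shuffle(ids)
    # Drop the remainder so every offer gets the same number of prospects.
    usable = len(ids) - len(ids) % len(offers)
    return {pid: offers[i % len(offers)] for i, pid in enumerate(ids[:usable])}
```

Storing the returned mapping (or the seed) means the assignment can be reproduced later and is not quietly changed mid-test. A plain shuffle only balances industries, sizes, and titles on average; with a small list it is worth checking the mix in each bucket before sending.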

Keep everything else fixed

Use the same sending domains and mailboxes, the same daily volume, the same sending hours, and the same follow-up cadence. Keep personalization level consistent, and keep the CTA format consistent.

Decide the stop rule before you start

Pick a minimum sample size per group and a time window, then stick to it. Stopping early because one offer “looks good” is how you trick yourself.

Aim to run at least one full sequence cycle so late replies count.
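A stop rule is easier to respect when it is written as a check rather than a feeling. The sketch below assumes two conditions taken from the text: every bucket has reached the planned sample size, and at least one full sequence cycle has passed since the last first-touch email. The function name, parameters, and dates in the example are illustrative.

```python
from datetime import date, timedelta


def can_stop(sent_per_bucket, min_per_bucket, last_first_touch, cycle_days, today):
    """True only when every bucket hit its sample size and a full cycle has passed."""
    enough_volume = all(n >= min_per_bucket for n in sent_per_bucket.values())
    cycle_done = today >= last_first_touch + timedelta(days=cycle_days)
    return enough_volume and cycle_done


sent = {"audit": 250, "pilot": 250, "template": 250, "benchmark": 240}
# Benchmark is still short of the planned 250, so the test keeps running.
ready = can_stop(sent, 250, date(2024, 3, 1), 10, date(2024, 3, 20))
```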

How to read results without fooling yourself

Offer tests often get “won” by the wrong email when teams focus on opens or total replies. Decide upfront what a good outcome looks like, then grade every reply the same way.

Define a positive reply in plain words. “Sounds interesting” isn’t the same as “Yes, book time.” If the definition changes mid-test, you’ll end up picking the offer you personally prefer.

A practical way to score replies:

- Qualified positive: asks for a call, pricing, timeline, or next steps

- Soft positive: interested but needs more info or later follow-up

- Neutral: out-of-office, auto-replies

- Negative: not interested, not relevant, stop emailing

- Redirect: points you to the right person
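To keep grading consistent, the five buckets above can be fixed as a closed set of labels so nobody invents a sixth category mid-test. This is a sketch that assumes a person assigns each label; `ReplyLabel` and `tally` are hypothetical names, and nothing here classifies reply text automatically.

```python
from collections import Counter
from enum import Enum


class ReplyLabel(Enum):
    QUALIFIED_POSITIVE = "qualified_positive"
    SOFT_POSITIVE = "soft_positive"
    NEUTRAL = "neutral"
    NEGATIVE = "negative"
    REDIRECT = "redirect"


def tally(labeled_replies):
    """Count labels per offer from (offer, label) pairs.

    A label may be a ReplyLabel or its string value; anything else raises
    ValueError, so an ad-hoc "maybe" bucket cannot slip in.
    """
    counts = {}
    for offer, label in labeled_replies:
        counts.setdefault(offer, Counter())[ReplyLabel(label)] += 1
    return counts
```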

Track hidden negatives that can hurt future performance: unsubscribes, spam complaints, and angry replies.

Then compare results two ways: by offer and by persona segment. A template might work best for founders, while a benchmark report might resonate more with a marketing lead. If you only look at the total, you can miss a clear persona-specific winner.
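A small sketch of the second view, grouping by offer and persona together. It assumes one record per prospect in the form `(offer, persona, is_positive)`; the function name and record shape are illustrative.

```python
from collections import defaultdict


def positive_rate_by_segment(records):
    """Return positives, prospects, and rate for each (offer, persona) pair."""
    totals = defaultdict(lambda: [0, 0])  # [positives, prospects]
    for offer, persona, is_positive in records:
        cell = totals[(offer, persona)]
        cell[0] += int(is_positive)
        cell[1] += 1
    return {
        key: {"positives": p, "prospects": n, "rate": p / n}
        for key, (p, n) in totals.items()
    }
```

Persona cells are smaller than whole buckets, so treat a difference inside one segment as a lead to retest rather than a settled result.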

When you pick the winner, weigh both volume and quality. If Offer A gets 12 positives but only 2 are qualified, while Offer B gets 8 positives with 6 qualified, Offer B is usually the better engine for meetings.
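The arithmetic behind that comparison, as a short sketch. The `qualified_share` helper is a hypothetical name; the counts are the ones from the example above.

```python
def qualified_share(positives, qualified):
    """Fraction of positive replies that are qualified."""
    if positives <= 0 or not 0 <= qualified <= positives:
        raise ValueError("need 0 <= qualified <= positives and positives > 0")
    return qualified / positives


offer_a = qualified_share(12, 2)  # 2 of 12 positives are qualified
offer_b = qualified_share(8, 6)   # 6 of 8 positives are qualified

# Offer B has fewer positives overall but more qualified ones, in count and in share.
b_is_stronger = 6 > 2 and offer_b > offer_a
```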

Example scenario: one list, four offers, one clear winner

Maya is an SDR selling HR software to mid-sized companies. She wants to test offers without changing list quality, so she pulls one clean segment: HR Directors at retail and logistics firms with 200 to 1,000 employees. Same data source, same filters, same send volume.

She sticks to one pain point across all offers: HR teams lose hours each week chasing missing onboarding steps, and handoffs break when hiring spikes.

She frames the same pain four ways:

- Audit: “I can review your onboarding flow and point out where steps get missed (no prep needed, just a quick call).”

- Pilot: “Pick one location or one hiring manager and run a 14-day pilot to prove time saved.”

- Template: “I can share the onboarding checklist plus email templates we see working for similar teams.”

- Benchmark: “I can send a short benchmark comparing your process to peers (time-to-first-day, completion rate, drop-offs).”

After two weeks, open rates are similar, but replies aren’t. The template wins on total positive replies and fastest time-to-meeting. The audit gets polite curiosity but fewer calendar bookings. The pilot attracts bigger companies but stalls on procurement questions. The benchmark sounds “consultant-y” and draws more deflections.

She improves the winning sequence without restarting the whole test: email 1 offers the template, email 2 asks one qualifying question (“Is onboarding owned by HR or each department?”), and email 3 introduces the pilot only after someone engages.

Common mistakes that make offer tests useless

Most tests fail because they change too many things at once or pick a winner based on the wrong signal.

Common mistakes:

- Changing the offer and the subject line (or first line) together

- Giving one offer a better slice of the list (higher intent titles, cleaner data)

- Stopping early after a handful of replies

- Judging by opens or clicks instead of positive replies and meetings

- Ignoring unsubscribes, spam complaints, and deliverability damage

A simple fix: pick one primary success metric (positive replies that can lead to a meeting), track the downside (unsubscribes and complaints), and keep sending conditions consistent.

Also watch how replies get labeled. A messy inbox makes it easy to count “maybes” as wins.

Quick checklist and next steps

Before you hit send, make sure your test is actually a test:

- Split the list randomly and evenly

- Lock the constants (sender, cadence, follow-up count, CTA style)

- Define success metrics upfront

- Agree on what counts as a positive reply

- Sanity-check deliverability basics (authenticated domain, warmed mailbox, no broken tracking)

During the run, don’t tweak copy mid-flight. If you change the engine while driving, you won’t know what caused the result.

After the run, use a clear winner rule: highest positive reply rate, with enough volume to trust it, and acceptable unsubscribe/complaint levels.
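That winner rule can be sketched as a filter followed by a ranking. The threshold values in the example are assumptions, not recommendations, and the check is a plain guardrail rather than a statistical significance test. `pick_winner` and the field names are hypothetical.

```python
def pick_winner(results, min_delivered, max_unsub_rate, max_complaint_rate):
    """Return the eligible offer with the highest positive reply rate, or None.

    results maps offer -> dict with "delivered", "positive",
    "unsubscribes", and "complaints" counts.
    """
    eligible = {}
    for offer, r in results.items():
        delivered = r["delivered"]
        if delivered == 0 or delivered < min_delivered:
            continue  # not enough volume to trust
        if r["unsubscribes"] / delivered > max_unsub_rate:
            continue  # too costly in unsubscribes
        if r["complaints"] / delivered > max_complaint_rate:
            continue  # too costly in complaints
        eligible[offer] = r["positive"] / delivered
    if not eligible:
        return None
    return max(eligible, key=eligible.get)


# Illustrative counts and thresholds only.
results = {
    "audit": {"delivered": 250, "positive": 18, "unsubscribes": 10, "complaints": 1},
    "pilot": {"delivered": 100, "positive": 9, "unsubscribes": 1, "complaints": 0},
    "template": {"delivered": 250, "positive": 15, "unsubscribes": 2, "complaints": 0},
    "benchmark": {"delivered": 250, "positive": 8, "unsubscribes": 1, "complaints": 0},
}
winner = pick_winner(results, min_delivered=200, max_unsub_rate=0.02, max_complaint_rate=0.003)
```

In this made-up data the audit has the highest positive reply rate but is excluded for its unsubscribe rate, and the pilot is excluded for volume, so the rule returns the template.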

If you want fewer moving parts while running tests like this, LeadTrain (leadtrain.app) puts domains, mailboxes, warm-up, sequences, and reply classification in one place so each offer variant can run under the same conditions.

FAQ

Why should I test the offer instead of only testing subject lines?

Test the offer when you care about replies and meetings, not just opens. A subject line can get attention, but the offer is what makes someone decide it’s worth responding and taking a next step.

How do I keep list quality the same across offer variants?

Start with one clean audience and one data source, then randomize the list once and split it evenly. Keep the same inclusion rules for every variant so you’re not accidentally testing “better accounts” versus “worse accounts.”

What’s the best metric to pick a winning offer?

Use positive reply rate as your primary metric, defined upfront in plain language. Then sanity-check with meeting intent, qualified replies, unsubscribes or complaints, and bounce rate so you don’t pick a “winner” that harms future sending.

What exactly should stay constant in an offer test?

Keep everything else the same: sender identity, sending domain, sequence length, timing, targeting rules, and volume. Only swap the offer block and a small, matching proof detail so the test isolates the offer itself.

How many emails or prospects do I need per offer to trust the result?

There is no universal number. A practical default is to run one full sequence cycle and send enough per variant that the ranking doesn’t hinge on a handful of replies. Set a minimum sample size and an end date before you start, and don’t stop early because one variant is ahead.

How should I score replies so I don’t fool myself?

Write a simple scoring rule before you launch and apply it consistently. Treat “book time” and “send pricing” as real positives, and keep soft interest, out-of-office, and polite brush-offs in separate buckets so you don’t inflate results.

When should I use an audit vs a pilot vs a template vs a benchmark?

Pick the offer based on what kind of action you want next. Audits usually work when you can promise a concrete output quickly, pilots work when you can limit scope and risk, templates work when speed matters, and benchmarks work when your data story is credible and clear.

What if the “winning” offer also increases unsubscribes or angry replies?

Unsubscribes and complaints are a cost, not just noise, and they can reduce deliverability over time. If an offer wins on replies but also spikes negative signals, tighten targeting, clarify the promise, and soften the ask before scaling it.

How do I tell if results are skewed by deliverability or bad data?

Check the bounce rate for each variant first. Noticeably higher bounces in one variant usually mean the list or source changed, not that the offer got worse. Pause scaling, clean and validate the data, and rerun the test with the same list rules so you can compare offers fairly.

What should I do after I find the best-performing offer?

Use the winner as your new control, then iterate one small change at a time, such as adding a qualifying question in follow-ups or adjusting the proof line. If you want consistency, using a platform like LeadTrain can help keep domains, warm-up, sequences, and reply classification uniform across variants while you test.