A/B test offers: a clean plan beyond subject lines

Learn a practical plan to A/B test offers, control variables, pick honest sample sizes, and read results without jumping to noisy conclusions.

Why offer A/B tests often give confusing results

Offer tests in cold outreach often feel random because the inbox is a messy place. You’re not testing in a lab. You’re testing against busy people, shifting priorities, spam filters, and lead lists that are never perfectly equal.

A common reason results feel confusing: people say they’re testing the offer, but they quietly change the copy too. If one version is shorter, clearer, more confident, or has a stronger call to action, you’re no longer learning about the offer. You’re learning about writing.

Even when you try to isolate it, the offer is tied to context. A “free audit” can look valuable to one segment and like extra work to another. If your two variants end up going to different job titles, company sizes, or industries, results swing and you blame the offer.

Most “dramatic winners” are just noise showing up as:

- Week-to-week swings because the prospects were different, not because the offer was better.

- Small samples where a few extra replies create a fake winner.

- Deliverability shifts (new domains, warm-up issues, authentication changes) that change who even sees the email.

- Timing effects like holidays, quarter-end, or an industry news cycle.

- Inconsistent reply handling, where one version gets more “not now” replies and you count them as success.

A familiar scenario: you run an offer test, Variant B gets 6 replies on Monday, and you declare it the winner. Then you realize those replies were mostly out-of-office and polite deferrals, and the rest of the week it goes quiet. That wasn’t magic. It was variance.

The goal isn’t certainty. A clean offer test reduces uncertainty so you can make a better bet. If you treat each test as evidence, not a final verdict, you stop chasing false winners and start building offers that hold up over time.

What counts as the offer (and what does not)

When people say they want to test offers, they often mean “change the email and see what happens.” That usually mixes too many variables. Start by defining the offer.

An offer is the trade you propose: what you ask the reader to do, what they get in return, and why it makes sense now.

The parts that are the offer

Think of the offer as a small package. Changing any of these changes the offer:

- CTA (the ask): “Reply YES,” “Pick a time,” “Send me the right contact,” “Want me to take a look?”

- Value angle (the promise): save time, reduce cost, get more leads, fix deliverability, improve conversion

- Incentive: free audit, template pack, benchmark report, gift card, extended trial

- Commitment level: “reply with a number” vs 5-minute call vs 30-minute demo

- Timing/urgency: “this week,” “before end of month,” “while we still have 3 slots”

Concrete examples of true offer variants:

- “Free 10-minute teardown of your outbound emails” vs “15-minute product demo”

- “7-day free trial” vs “one-page case study tailored to your company”

- “I’ll send a short list of quick wins” vs “I’ll build a mini plan for your next sequence”

What is not the offer

These can change results a lot, but they’re separate tests:

- Subject line and preview text

- Sender name, sending address, signature style

- Deliverability factors: domain age, mailbox warm-up, spam placement

- Audience mix: industry, seniority, lead source

- Send timing: day of week, time of day

If your goal is to learn which offer works, keep the non-offer items steady and change only the trade you’re proposing.

Choose one goal metric you can measure cleanly

If you want to test offers (not just subject lines), pick one primary success metric. One is enough. Multiple “main” metrics invite cherry-picking after the fact.

Choose the metric that matches the outcome you actually care about:

- Positive reply rate: human replies that signal interest, excluding bounces, unsubscribes, out-of-office notices, and clear refusals

- Qualified interest rate: replies that show a real use case, not just “send info”

- Meetings booked rate: the cleanest business outcome, but slower to measure

- Cost per qualified reply: if you track spend per lead source

Whatever you pick, define labels so every reply is counted the same way. Write the rules down before you start. For example:

- “Interested” = asks about pricing, timeline, fit, or next steps

- “Not interested” = clear no

- “Out-of-office” = automatic deferral with no engagement

Also define your response window up front. A practical rule for cold email is to count replies that arrive within 7 to 10 days after the first send (or within 7 to 10 days of each step, if you’re comparing offers inside a sequence). Late replies happen, but they add noise and can favor whichever variant happened to run longer.
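To make the label rules and the response window concrete, here is a minimal Python sketch. The label names, the 10-day window, and the `interested_rate` helper are illustrative assumptions, not a prescribed format; swap in whatever rules you wrote down.

```python
from datetime import datetime, timedelta

# Assumed label set and window; replace with your own written rules.
POSITIVE_LABELS = {"interested"}
RESPONSE_WINDOW = timedelta(days=10)  # upper end of the 7 to 10 day rule


def interested_rate(delivered, replies, window=RESPONSE_WINDOW):
    """Interested replies inside the window, divided by delivered emails.

    replies is a list of (sent_at, replied_at, label) tuples.
    """
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    counted = sum(
        1
        for sent_at, replied_at, label in replies
        if label in POSITIVE_LABELS and replied_at - sent_at <= window
    )
    return counted / delivered


sent = datetime(2024, 3, 4, 9, 0)
replies = [
    (sent, sent + timedelta(days=1), "interested"),      # counted
    (sent, sent + timedelta(days=2), "out_of_office"),   # wrong label
    (sent, sent + timedelta(days=3), "not_interested"),  # wrong label
    (sent, sent + timedelta(days=15), "interested"),     # outside the window
]
rate = interested_rate(400, replies)
print(f"{rate:.2%}")  # 1 counted reply out of 400 delivered
```

Because the labels and the window are fixed in code before launch, both variants get scored by the same rules.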

Avoid using opens and clicks as your primary offer metric. Opens are inflated by privacy features, and clicks can reflect curiosity rather than intent.

How to isolate the offer and keep everything else stable

Offer tests stop being clean for one simple reason: two things change at once. If you want your results to mean anything, you need one clear difference between A and B, and everything else should be boringly consistent.

Keep the context fixed

Lock the context before you write a single line. The same people should receive A and B in the same way, during the same window. Otherwise you’re testing list quality, timing, or deliverability.

Keep these constant:

- List source and filters: same provider, same job titles, same company size

- Persona and use case: don’t mix founders with marketing managers in one test

- Sequence structure: same steps, same delays, same follow-up logic

- Sending schedule: same days, same hours, same daily volume limits

- Deliverability setup: same sending domain and mailbox health

Also match the email structure. If offer A is two short sentences and offer B is a long paragraph with extra proof, you changed more than the offer. Keep the format aligned: similar length, similar number of lines, same CTA shape.

Change one thing on purpose

Define each offer in one sentence, then edit only the minimum text needed to reflect the swap.

Example:

- Offer A: “15-minute audit with 3 fixes”

- Offer B: “free template pack plus a short walkthrough”

Keep the opener, the pain, and the tone the same. Only swap the trade.
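One way to confirm that only the trade changed is to diff the two drafts before launch. This is a rough sketch using Python’s standard `difflib`; the sample emails and the `changed_lines` helper are hypothetical.

```python
import difflib


def changed_lines(email_a, email_b):
    """Lines that differ between two drafts.

    Lines only in A start with "- ", lines only in B start with "+ ".
    """
    diff = difflib.ndiff(email_a.splitlines(), email_b.splitlines())
    return [line for line in diff if line.startswith(("- ", "+ "))]


email_a = """Hi Dana,
Noticed your team is hiring SDRs.
Would a 15-minute audit with 3 fixes be useful?
Reply YES and I will send times."""

email_b = """Hi Dana,
Noticed your team is hiring SDRs.
Would a free template pack plus a short walkthrough be useful?
Reply YES and I will send times."""

changes = changed_lines(email_a, email_b)
for line in changes:
    print(line)
```

If the diff shows more than the offer sentence, something other than the offer is being tested.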

If you discover an unrelated issue mid-test (broken personalization field, a bounce spike, a domain problem), don’t patch it and keep going. Pause, fix, then restart with a fresh split and a note of what changed. Otherwise you’ll blend “offer impact” with “incident impact.”

Step by step: set up the test from idea to launch

Start by making each offer simple enough to say in one sentence. If you can’t do that, you can’t test it.

Write two offers that differ in value, not just wording. For example:

- “Free 10-minute teardown of your outbound emails”

- “I’ll send a 3-slide plan to add 10 qualified meetings this month”

Then build two versions of the same sequence. Keep the structure identical: same number of steps, same send days, same personalization approach, same CTA format. Only change the offer line(s) where the value is presented.

A simple build plan:

- Draft Offer A and Offer B in one sentence each.

- Duplicate the sequence and change only the offer-related sentence(s).

- Use the same audience definition and the same list source.

- Split prospects 50/50 at random so each variant sees comparable people.

- Run both variants at the same time.
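The random 50/50 split can be sketched in a few lines of Python. The `split_prospects` helper and the fixed seed are assumptions for illustration; the seed only makes the split reproducible so you can audit it later.

```python
import random


def split_prospects(prospects, seed=42):
    """Shuffle a copy of the list, then cut it in half."""
    shuffled = list(prospects)
    random.Random(seed).shuffle(shuffled)
    middle = len(shuffled) // 2
    return shuffled[:middle], shuffled[middle:]


# Hypothetical prospect list
prospects = [f"prospect{i}@example.com" for i in range(801)]
variant_a, variant_b = split_prospects(prospects)
print(len(variant_a), len(variant_b))  # 400 401
```

Shuffling before cutting matters: splitting an export by row order can hand one variant all the leads from one source or one industry.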

Set your rules before you send:

- Stop rule: a fixed end date or a fixed delivered sample size per variant.

- Success metric: the one metric you picked.

- Reply labels: the definitions you’ll use.

Before launch, do a final sanity check:

- Both versions ask for the same kind of reply.

- The only real difference is the offer.

- The split is random and simultaneous.

- The stop rule is written down and won’t change mid-run.

Sample size and timing that keep results honest

Small tests love to lie. With only a handful of replies, one extra “yes” (or one angry response) can swing your rates by 50% or more.

Practical numbers that usually behave

If you can, aim for 300 to 500 delivered prospects per variant. That’s often enough that a few random replies won’t crown a false winner.

If you can’t reach that volume:

- Don’t pretend you’re measuring tiny differences.

- Only trust big swings (for example, one offer getting roughly 2x the positive replies).

- Keep it to two variants. More versions spread your volume too thin.
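To see why volume matters, it helps to look at how wide the plausible range around a reply rate is at different sample sizes. The sketch below uses the Wilson score interval, a standard statistical formula that is not part of the plan above, and assumes a 4% positive reply rate purely for illustration.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


for n in (50, 400):
    replies = round(0.04 * n)  # assumed 4% positive reply rate
    low, high = wilson_interval(replies, n)
    print(f"n={n}: {replies} replies, plausible rate {low:.1%} to {high:.1%}")
```

Under these assumptions, 50 delivered gives a range of roughly 1% to 13%, while 400 delivered narrows it to roughly 2.5% to 6.4%. Two offers with overlapping ranges that wide cannot be told apart.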

Timing matters as much as volume. Cold email performance changes by day of week, holidays, and inbox fatigue. If you run a test for two days, you might be measuring Monday vs Wednesday more than Offer A vs Offer B.

A safer minimum runtime is 7 full days. For slower-to-respond audiences (enterprise, founders, busy leaders), 10 to 14 days is often more realistic.

The biggest trap is peeking. If you check results daily and stop the moment one offer looks ahead, you’re selecting the winner at the noisiest point.

Pick a stop rule and stick to it:

- Fixed end date (example: 14 days), or

- Fixed delivered sample size (example: 400 delivered per variant)
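If you track tests in code, a stop rule can be written down as a small immutable object so it cannot drift mid-run. This is a sketch under assumptions: the `StopRule` class and its field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)  # frozen: the rule cannot be edited after creation
class StopRule:
    end_date: Optional[date] = None
    delivered_per_variant: Optional[int] = None

    def should_stop(self, today, delivered_a, delivered_b):
        """True once the end date arrives or BOTH variants hit the target."""
        if self.end_date is not None and today >= self.end_date:
            return True
        if self.delivered_per_variant is not None:
            return min(delivered_a, delivered_b) >= self.delivered_per_variant
        return False


rule = StopRule(delivered_per_variant=400)
print(rule.should_stop(date(2024, 3, 10), 400, 380))  # False
print(rule.should_stop(date(2024, 3, 12), 400, 400))  # True
```

Note that the check never looks at reply counts. The decision to stop depends only on time or volume, which is what protects you from peeking.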

If volume is low, adjust the plan instead of forcing a “clean” result. Run longer, test fewer things at once, and accept that you’re looking for obvious wins, not 5% lifts.

How to read results without overreacting

Start with the one metric you chose before launch. If the goal metric was “interested replies,” compare that first and ignore everything else for a moment. Mixing in extra metrics is how you talk yourself into a winner that isn’t real.

Then separate impact from confidence:

- An effect can be real but too small to matter (a move from 2.0% to 2.3% interested replies might not change pipeline).

- A big-looking lift on a small sample can still be shaky.
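A quick way to separate the size of a lift from how much to trust it is a two-proportion z-test. This is a standard approximation added here for illustration, not a method the plan requires, and it gets rough when reply counts are very small. The `compare_rates` helper and the example counts are hypothetical.

```python
import math


def compare_rates(success_a, n_a, success_b, n_b):
    """Return (absolute lift of B over A, two-sided p-value).

    Uses a pooled two-proportion z-test (normal approximation).
    """
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return p_b - p_a, 1.0
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, p_value


# 2 vs 5 interested replies on 50 delivered each: 4% vs 10%
lift, p_value = compare_rates(2, 50, 5, 50)
print(f"lift: {lift:+.1%}, p-value: {p_value:.2f}")
```

In this example B looks like it more than doubles the interested rate, yet the p-value stays well above the conventional 0.05 cutoff. That is a big-looking lift on a small sample: worth a rerun, not a rollout.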

Before you declare a winner, check that the two groups were actually similar. Uneven distribution creates fake lifts, especially if one variant got more senior titles or more of a high-performing industry.

Quick sanity checks:

- Audience split: titles, company size, industry, region

- Timing: did one variant hit a holiday week or different send days?

- Deliverability signals: bounces and spam complaints

- Reply mix, not just reply count
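The audience split check can be done with a simple share comparison per attribute. The sketch below is one possible approach; the `share_gaps` helper, the sample titles, and the 10-point warning threshold are all assumptions.

```python
from collections import Counter


def share_gaps(group_a, group_b):
    """Per-value difference in share (B minus A) for one attribute."""
    if not group_a or not group_b:
        raise ValueError("both groups must be non-empty")
    counts_a, counts_b = Counter(group_a), Counter(group_b)
    gaps = {}
    for value in sorted(set(counts_a) | set(counts_b)):
        gaps[value] = counts_b[value] / len(group_b) - counts_a[value] / len(group_a)
    return gaps


# Hypothetical job titles per variant
titles_a = ["VP Finance"] * 30 + ["Controller"] * 70
titles_b = ["VP Finance"] * 45 + ["Controller"] * 55

gaps = share_gaps(titles_a, titles_b)
for title, gap in gaps.items():
    flag = "  <- check this" if abs(gap) > 0.10 else ""  # assumed threshold
    print(f"{title}: {gap:+.0%}{flag}")
```

Run the same comparison for company size, industry, and region. A large gap on any of them is a reason to distrust the lift, not proof that the result is wrong.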

Reply mix matters because “more replies” can mean “more objections.” If you can, review replies by category (interested, not interested, out-of-office, unsubscribe). A variant that increases “not interested” replies might just be clearer, not better.
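Here is a small sketch of a reply mix comparison, assuming replies have already been labeled. The `reply_mix` helper and the counts are made up for illustration.

```python
from collections import Counter


def reply_mix(labels):
    """Share of each reply category among all replies for one variant."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: count / total for label, count in counts.items()}


# Hypothetical labeled replies: B gets twice as many replies as A
labels_a = ["interested"] * 6 + ["not_interested"] * 4
labels_b = ["interested"] * 5 + ["not_interested"] * 15

mix_a = reply_mix(labels_a)
mix_b = reply_mix(labels_b)
print(f"A: {len(labels_a)} replies, {mix_a['interested']:.0%} interested")
print(f"B: {len(labels_b)} replies, {mix_b['interested']:.0%} interested")
```

In this made-up case B wins on raw reply count but loses on interested replies, both as a share and in absolute numbers.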

When you wrap up the test, write a short decision note:

- What you believe right now (based on the primary metric)

- What you don’t know yet (sample size, skew, timing)

- What you’ll do next (roll out, rerun, or tighten the variation)

That keeps experimentation calm and repeatable when the numbers are close.

Common mistakes that create noisy conclusions

Most “failed” tests didn’t fail because the offer was bad. They failed because the test mixed signals.

Changing more than the offer is the top mistake. If you adjust the offer, the subject line, and the audience at the same time, any result is a blur.

Deliverability differences are the quiet killer. If Variant A goes out from a warmed setup and Variant B goes out from a new or recently changed domain or mailbox, you’re not testing the offer. You’re testing inbox placement. Lock your sending setup during the test.

Follow-up drift is another classic. You split Email 1 cleanly, then someone edits follow-up #2 for only one version, or changes the CTA. Now you’re comparing two different sequences, not two offers.

Other common noise sources:

- Mixing audiences so one variant gets cleaner leads or bigger accounts

- Sending on different days or at very different volumes

- Pausing one variant mid-run after early results look “good”

- Calling a winner because one big account replied (outliers swing small samples)

- Counting “replies” without separating interested vs not interested

Quick pre-launch checklist

Before you hit send, make sure you’re actually testing the offer, not a pile of small changes.

- One offer difference only. Decide the single change (audit vs demo, free trial vs report, low-friction CTA vs calendar ask). Keep the rest as close as possible.

- Same audience rules. Same lead source and filters for both variants.

- Same sequence and schedule. Same steps, timing, days, and volume.

- Written stop rule. Decide the end date or delivered sample size before launch.

- Clear reply labels. Define what counts as positive and what counts as qualified, including edge cases like “not now.”

Example: testing two offers in a cold email sequence

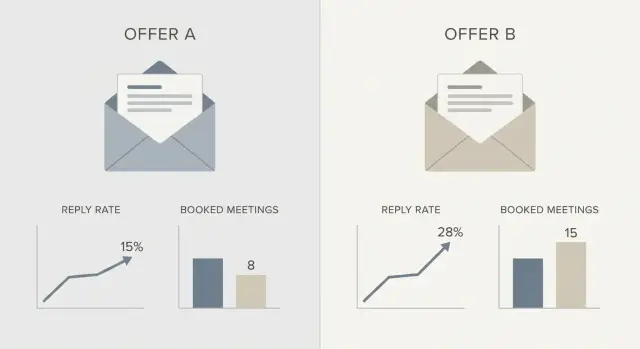

An SDR reaches out to finance leaders (VP Finance, Controller, Head of FP&A) at mid-market SaaS companies. The goal is to learn which offer earns more genuine interest, not which subject line gets more opens.

Two offers:

- Offer A: a 15-minute teardown of their outbound emails, followed by 3 specific fixes they can apply.

- Offer B: a short benchmark report comparing them to similar SaaS teams, followed by a 10-minute review call.

To isolate the offer, everything else stays the same: audience filters, sending setup, and email structure. Only the trade changes.

Keep constant across both variants:

- Lead list rules (role, company size, industry, geography)

- Copy skeleton (opening line, credibility line, CTA format, length)

- Personalization method (one sentence based on role or tech stack)

- Follow-up cadence (same steps, delays, send days)

- Sender identities and domain health

Split prospects 50/50 at the start of the sequence and run both at the same time.

Decide the winner using two numbers you can defend:

- Primary: interested rate (interested replies divided by delivered emails)

- Secondary: meetings booked, treated as slower feedback

If Offer A has a higher interested rate and leads to at least as many booked meetings after the same number of days, keep it and iterate. If one offer gets more replies but most are “not interested,” it’s probably attracting the wrong kind of attention.

Next steps: iterate calmly and make testing easier

Once you have a winner, treat it like a new default, not a trophy. Make that offer the baseline for the next round, then change only one offer angle at a time.

Keep a simple experiment log so you don’t repeat tests or misremember outcomes:

- Hypothesis

- Audience rules

- Dates and sample size

- Results (primary metric plus short notes)

- Decision (keep, revert, retest)
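If a spreadsheet feels too loose, the log can be kept as structured records. This is a minimal sketch; the `ExperimentLogEntry` fields mirror the list above, but the names, the sample values, and the CSV output are assumptions.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExperimentLogEntry:
    hypothesis: str
    audience_rules: str
    start_date: str
    end_date: str
    delivered_per_variant: int
    primary_result: str
    decision: str  # "keep", "revert", or "retest"


def to_csv(entries):
    """Render log entries as CSV text with a header row."""
    buffer = io.StringIO()
    names = [f.name for f in fields(ExperimentLogEntry)]
    writer = csv.DictWriter(buffer, fieldnames=names)
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


# Hypothetical entry; the numbers are placeholders, not real results
entry = ExperimentLogEntry(
    hypothesis="Teardown beats benchmark report on interested rate",
    audience_rules="Finance leaders at mid-market SaaS",
    start_date="2024-03-04",
    end_date="2024-03-18",
    delivered_per_variant=400,
    primary_result="A 3.0% vs B 2.0% interested",
    decision="retest",
)
csv_text = to_csv([entry])
print(csv_text)
```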

Before you judge any offer, confirm deliverability is steady. If inbox placement is wobbling because a mailbox is new, warm-up stopped, or authentication changed, fix that first.

If consistent measurement is a bottleneck for your team, an all-in-one platform can help by keeping setup and tracking in one place. For example, LeadTrain (leadtrain.app) combines sending domains, warm-up, multi-step sequences, and reply classification (interested, not interested, out-of-office, bounce, unsubscribe) so you can compare variants without a lot of manual sorting.

When deciding what to test next, pick the smallest offer change that answers a real question. If your current winner gets replies but few meetings, test commitment level instead of rewriting the whole pitch: a lower-friction CTA vs a calendar ask, or an audit vs a 10-minute call. Changing the proof behind the same offer is a separate test.

Move one step at a time. Steady learning beats constant motion.

FAQ

Why do offer A/B tests in cold email feel so inconsistent?

Offer tests feel random when more than the offer changes or when A and B reach different kinds of prospects. Keep the audience, timing, sequence structure, and sending setup identical so the only meaningful difference is the trade you’re proposing.

What exactly counts as the “offer” in a cold email?

The offer is the trade: what you ask the reader to do, what they get, and why it’s worth doing now. Changing the CTA, value angle, incentive, commitment level, or urgency is changing the offer, even if most of the email stays the same.

What changes are not part of an offer test, even if they move the numbers?

Subject lines, sender name, email length, tone, proof points, timing, and deliverability are not the offer, but they can strongly affect outcomes. If you change any of those while “testing the offer,” you’ll end up learning about writing or inbox placement instead.

What’s the best success metric for an offer test?

Pick one primary metric that matches what you truly want, then stick to it for the whole test. For most teams, “qualified interest” or “meetings booked” is more useful than raw reply rate because it reduces the chance you reward empty or negative responses.

How should I label replies so results don’t get distorted?

Write simple label rules before you send, then apply them the same way to every reply. Decide upfront whether out-of-office, “not now,” or “send info” counts as success, so you don’t accidentally crown a winner based on inconsistent scoring.

How do I make sure Variant A and B reach comparable prospects?

Run both variants at the same time, split prospects randomly 50/50, and keep the list source and filters identical. If one variant gets more senior titles or a cleaner segment, you’re testing list mix, not the offer.

How many emails do I need per variant to trust the result?

Aim for roughly 300 to 500 delivered prospects per variant if you can, because small samples swing wildly. If you’re below that, only trust big differences and avoid adding extra variants that spread your volume too thin.

How long should I run an offer test before deciding?

Run the test for at least 7 full days, or 10 to 14 days for slower-to-respond audiences, so it covers normal day-to-day variation. Count replies in a fixed window like 7 to 10 days after the first send. Don’t stop early just because one version looks ahead on Monday; that’s often noise.

What should I do if deliverability or copy changes mid-test?

Pause and restart if something fundamental changes, like a deliverability issue, a broken personalization field, or edits to follow-ups in only one variant. If you patch mid-test and keep going, you’ll blend “offer impact” with “incident impact” and won’t know what caused the change.

How can LeadTrain help me run cleaner offer A/B tests?

Use a workflow that keeps sending setup stable, enforces identical sequences, and applies consistent reply categories across variants. LeadTrain is built around that idea by combining domains, mailboxes, warm-up, sequences, and automated reply classification in one place so you spend less time cleaning data and more time comparing the offer itself.